New 3D IPUs Go for “WoW Factor” with TSMC’s Wafer-on-Wafer Technology

Graphcore has revealed new Intelligence Processing Units (IPUs) based on 3D wafer-on-wafer (WoW) technology. Next up for the company: an AI computer system.

Artificial intelligence (AI) capabilities, such as computer vision and conversational interfaces, have become a linchpin in various industries: telecommunications, financial services, healthcare, pharmaceuticals, and many others. These AI-based algorithms help companies increase revenue when applied to inventory and parts optimization, demand forecasting, customer interaction, and marketing. To process the massive amounts of data these applications involve, many providers are searching for high-performance computing systems that can handle AI workloads efficiently.

One company offering a solution is Graphcore, which recently unveiled its 3D wafer-on-wafer (WoW) processor, the Bow IPU (Intelligence Processing Unit). Graphcore claims the Bow IPU delivers up to 40% higher performance and up to 16% better power efficiency for real-world AI applications than the previous generation of IPUs at the same price, with no changes required to existing software.

Bow processors. Image (modified) used courtesy of Graphcore

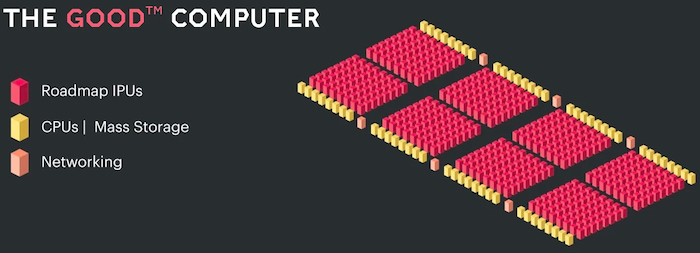

Graphcore also teased its new AI computer: the Good Computer, an "ultra-intelligent" AI computer that will be powered by next-generation IPU technology. The new AI computer aims to provide over 10 ExaFLOPS of computing power (one ExaFLOPS is 10^18 floating-point operations per second).

TSMC Brings "WoW Factor" to the Table

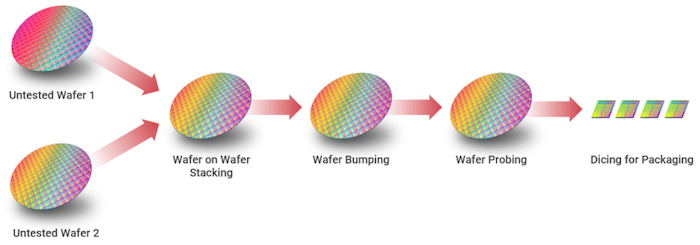

The Bow IPUs pack a significant performance boost and improved power efficiency, thanks to TSMC’s wafer-on-wafer (WoW) 3D technology. WoW technology bonds two wafers together with one flipped onto the other, so that each wafer's silicon substrate sits on the outside, followed by its front-end-of-line (FEOL) and then back-end-of-line (BEOL) layers toward the bonded interface. Through-silicon vias (TSVs) handle the I/O connections for the stacked wafers. With this technology, big dies like CPUs and GPUs can achieve high computing performance and high memory bandwidth.

WoW packaging. Image used courtesy of TSMC

In a nutshell, WoW packaging works by stacking layers vertically instead of placing them side by side across the board. This approach allows more cores to be integrated into a single package. Because the stacked layers sit so close together, communication with other modules is also very fast, with minimal latency.

In a Bow IPU, two wafers, one for AI processing and the other for power delivery, are bonded together to form a 3D die. Architecturally compatible with the GC200 IPU processor, the AI processing die includes 1,472 independent IPU-core tiles and can run more than 8,800 threads. It also supports 900 MB of in-processor memory.
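As a rough sanity check on these figures, the short sketch below divides the quoted thread count and memory across the 1,472 tiles. It uses only the numbers given above; treating "900 MB" as decimal megabytes and assuming threads and memory are spread evenly across tiles are assumptions made for illustration, not details confirmed by the article.

```python
# Figures quoted in the article for the Bow IPU's AI processing die.
TILES = 1_472                 # independent IPU-core tiles
MIN_THREADS = 8_800           # "more than 8,800 threads" (a lower bound)
MEMORY_BYTES = 900 * 10**6    # 900 MB, ASSUMING decimal megabytes


def threads_per_tile(total_threads: int, tiles: int) -> float:
    """Average threads per tile, assuming an even split across tiles."""
    return total_threads / tiles


def memory_per_tile_kb(memory_bytes: int, tiles: int) -> float:
    """Average in-processor memory per tile in kB (1 kB = 1,000 bytes)."""
    return memory_bytes / tiles / 1_000


if __name__ == "__main__":
    print(f"threads per tile: {threads_per_tile(MIN_THREADS, TILES):.2f}")
    print(f"memory per tile:  {memory_per_tile_kb(MEMORY_BYTES, TILES):.0f} kB")
```

Under those assumptions, the quoted totals work out to roughly six threads and a little over 600 kB of memory per tile.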

The IPU integrates deep trench capacitors in the power delivery die near the processing cores and memory. The deep trench capacitors provide high capacitance density, and Graphcore credits their placement with enabling the performance increase of up to 40%.

Performance of the Bow IPUs

Graphcore's "Bow Pod" AI computer systems are built from Bow-2000 IPU Machines, each containing four Bow IPUs and delivering 1.4 PetaFLOPS of AI compute. According to Graphcore, Bow Pods can deliver real-world performance at scale for a wide range of AI applications, from GPT and BERT for natural language processing to EfficientNet and ResNet for computer vision to graph neural networks, and many more.

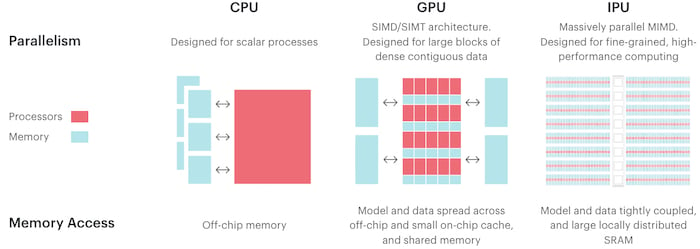

Comparison of CPUs, GPUs, and IPUs. Image used courtesy of Graphcore

The Bow Pod256 delivers more than 89 PetaFLOPS of AI compute, and the superscale Bow Pod1024 delivers 350 PetaFLOPS of AI compute, allowing engineers to train ever-larger AI models.
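The Pod figures are consistent with simple linear scaling from the Bow-2000 numbers quoted earlier. The sketch below assumes that the number in a Pod's name is its IPU count (for example, 256 IPUs in a Bow Pod256) and that compute scales linearly with the number of machines; both are assumptions for illustration, and `pod_pflops` is a hypothetical helper, not a Graphcore API.

```python
# Figures quoted in the article for one Bow-2000 IPU Machine.
PFLOPS_PER_MACHINE = 1.4   # AI compute per Bow-2000
IPUS_PER_MACHINE = 4       # Bow IPUs per Bow-2000


def pod_pflops(ipu_count: int) -> float:
    """Estimated peak AI compute of a pod, ASSUMING linear scaling.

    ipu_count is ASSUMED to be the number in the pod's name
    (e.g. 256 for a Bow Pod256).
    """
    machines = ipu_count / IPUS_PER_MACHINE
    return machines * PFLOPS_PER_MACHINE


if __name__ == "__main__":
    for ipus in (16, 256, 1024):
        print(f"Bow Pod{ipus}: about {pod_pflops(ipus):.1f} PetaFLOPS")
```

With those assumptions, 256 IPUs come to about 89.6 PetaFLOPS and 1,024 IPUs to about 358.4 PetaFLOPS, which lines up with the "more than 89" and "350" figures Graphcore quotes.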

Graphcore also claims that the Bow Pod16 provides five times better performance than the NVIDIA DGX A100 system at half the price. Moreover, when Graphcore tested Bow Pods across various AI workloads, they showed up to 16% better performance per watt.

Graphcore's Bow Pod compared to an analogous offering from NVIDIA. Image used courtesy of Graphcore

Graphcore says its new IPUs deliver higher performance without any changes to the software, which means programmers do not need to modify their existing code. Combined with no price increase, this results in low development costs, no learning curve for developers, and faster time to market.

The Good Computer

In addition to its Bow IPU announcement, Graphcore recently revealed that it is developing a so-called ultra-intelligent AI computer that it hopes will exceed the parametric capacity of the human brain. The computer is named in honor of Jack Good, who first theorized a machine that could someday exceed the capability of the human brain in his 1965 paper "Speculations Concerning the First Ultraintelligent Machine."

Key building blocks of the Good Computer. Image used courtesy of Graphcore

Graphcore reports that it is already developing the next generation of IPU technology that will power this AI computer. The Good Computer is designed to include:

- Over 10 ExaFLOPS of AI floating-point compute

- Up to 4 Petabytes of memory with a bandwidth of over 10 Petabytes/second

- Support for AI model sizes of 500 trillion parameters

The estimated cost of the computer is 120 million dollars.
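To put the Good Computer targets in proportion, the sketch below derives a few ratios from the quoted figures. It assumes decimal prefixes (peta = 10^15, exa = 10^18) and treats the "over" and "up to" bounds as exact values, so the results are order-of-magnitude estimates rather than specifications.

```python
# Decimal SI prefixes (an ASSUMPTION; the article does not say which it uses).
TERA = 10**12
PETA = 10**15
EXA = 10**18

# Targets quoted in the article, with the bounds treated as exact values.
COMPUTE_FLOPS = 10 * EXA          # "over 10 ExaFLOPS"
MEMORY_BYTES = 4 * PETA           # "up to 4 Petabytes"
BANDWIDTH_BYTES_PER_S = 10 * PETA # "over 10 Petabytes/second"
PARAMETERS = 500 * TERA           # "500 trillion parameters"


def bytes_per_parameter() -> float:
    """Memory available per model parameter at the largest model size."""
    return MEMORY_BYTES / PARAMETERS


def seconds_to_read_all_memory() -> float:
    """Time to stream the full memory once at the quoted bandwidth."""
    return MEMORY_BYTES / BANDWIDTH_BYTES_PER_S


def flops_per_byte() -> float:
    """Floating-point operations available per byte moved from memory."""
    return COMPUTE_FLOPS / BANDWIDTH_BYTES_PER_S


if __name__ == "__main__":
    print(f"bytes per parameter:      {bytes_per_parameter():.0f}")
    print(f"seconds per memory sweep: {seconds_to_read_all_memory():.1f}")
    print(f"FLOPs per byte moved:     {flops_per_byte():.0f}")
```

On these assumptions, the targets allow about 8 bytes of memory per parameter, a full pass over memory in under half a second, and on the order of 1,000 floating-point operations for every byte moved.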

Future of AI-capable Hardware

As AI models grow more complex, the demand for hardware that can handle their computation grows as well. Several companies, including NVIDIA and Cerebras, have plans for brain-scale computing hardware. Emerging semiconductor technologies from these companies will play a major role in achieving high levels of computational power.

Wafer-stacking technology presents opportunities beyond isolating power delivery: additional wafers can be stacked for memory, processing, and power delivery to increase density and performance. However, this technology may also face challenges related to thermal management. Given the trend toward vertical integration and 3D chip stacking, Graphcore's multi-wafer approach may become more commonplace among next-generation processing units.