Marvell’s OCTEON 10: The First DPU To Use Neoverse N2 ARMv9 Architecture

Marvell’s newest 5 nm data processing unit (DPU), the OCTEON 10, is said to offer a 3x performance improvement over the previous generation, along with the new Neoverse N2 core built on ARMv9.

The modern workload of a data center is focused not on applications but on the parsing of data. The general-purpose processor of the past is no longer the optimal hardware for networking, AI, video, and storage.

The alternative is the data processing unit, or DPU, first introduced in 2005 by Cavium (later acquired by Marvell). DPUs can be classified as highly reprogrammable, network-focused processors with built-in hardware accelerator functions.

Marvell has released a succession of improved OCTEON generations since 2010 and began incorporating ARM cores in 2015. Last week, Marvell added to the lineup by announcing a new family of DPUs, the OCTEON 10.

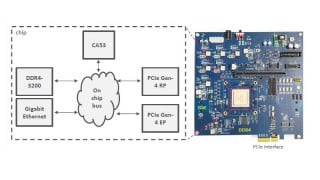

The OCTEON 10 DEV Platform will be available in Q4 2021. Screenshot used courtesy of Marvell

This article will attempt to break down the OCTEON 10 in terms of key functionality, looking at how the ARMv9 architecture is being incorporated into this DPU family. Finally, it'll cover the improvement of the Marvell DPU SoC over previous generations.

Key Features of the OCTEON 10 Family

The newest member of Marvell's DPU family, the OCTEON 10, is claimed to pack an incredible amount of functionality into a single SoC. According to Marvell, it is the industry's first 5 nm DPU. This reduction in geometry can allow for a greater density of functions, along with a lower power profile.

However, it's not just the shrinking geometry that makes this DPU interesting. The SoC features inline functions for IPSec, vector packet processing, hardware-centric AI/ML inferences, and a host of high-speed interfaces, just to name a few.

The block diagram, as shown below, gives a glimpse into the capabilities of the OCTEON 10 family.

The OCTEON 10 data processing unit block diagram. Image used courtesy of Marvell

Another prominent feature, also said to be an industry first, is the inclusion of the ARM Neoverse N2 core. What makes this core so revolutionary?

The Neoverse N2: Built on ARMv9

The Neoverse N2 is the first core built on the ARMv9 specification. ARMv9, unveiled earlier this year, is the first new revision of the ARM specification in a decade.

The main feature set for the Neoverse N2 core. Image used courtesy of ARM

The N2 is said to bring numerous upgrades over the N1, including enhancements to power management, memory partitioning, security, scalability, and vector performance.

However, the N2 is only one of several significant enhancements to the OCTEON lineup. So, what other leaps has the newest OCTEON DPU made?

Improving the Data Processing Unit

The OCTEON 10 brings new enhancements to the portfolio of solutions available from Marvell, including scaling from eight N2 cores up to thirty-six. This DPU is designed to enhance not just data centers but also enterprise systems and edge devices.

So far, this article has covered two significant features that are said to be industry firsts: the 5 nm process for the DPU and the N2 core. However, there are two more firsts that Marvell attributes to the OCTEON 10: hardware-based vector packet processing and inline hardware acceleration engines for machine learning and AI.

Before diving into specifications, it is worth considering what vector packet processing is. More specifically, how does it optimize network throughput?

What is Vector Packet Processing?

The basic principle behind vector packet processing (VPP) is batch optimization. A series of packets is grouped into a vector before being run through the network stack. The entire vector is processed at each node before moving on to the next one.

Aside from the advantages of batch processing itself, the VPP model is extensible, so adding plugins to the network stack for hardware acceleration should be simple.

The concept for vector packet processing. Image used courtesy of FD.io
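To make the batching idea concrete, here is a minimal Python sketch of the principle described above. It is not the FD.io VPP API and says nothing about how the OCTEON 10 implements this in hardware; the names `Packet`, `drop_expired`, `decrement_ttl`, `GRAPH`, `process_vector`, and `process_scalar` are hypothetical and exist only for illustration. Each node receives the whole vector and finishes with it before the next node runs, in contrast to the scalar approach that walks every node once per packet.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Packet:
    """Hypothetical, heavily simplified packet used only for this sketch."""
    src: str
    dst: str
    ttl: int


# A node takes a whole vector (batch) of packets and returns the survivors.
Node = Callable[[List[Packet]], List[Packet]]


def drop_expired(vector: List[Packet]) -> List[Packet]:
    """Drop packets whose TTL would reach zero on forwarding."""
    return [p for p in vector if p.ttl > 1]


def decrement_ttl(vector: List[Packet]) -> List[Packet]:
    """Return copies of the packets with TTL reduced by one."""
    return [Packet(p.src, p.dst, p.ttl - 1) for p in vector]


# Illustrative processing graph: an ordered list of nodes.
GRAPH: List[Node] = [drop_expired, decrement_ttl]


def process_vector(vector: List[Packet], graph: List[Node]) -> List[Packet]:
    """Vector model: each node handles the entire batch before the next runs."""
    for node in graph:
        vector = node(vector)
        if not vector:  # nothing left to forward
            break
    return vector


def process_scalar(packets: List[Packet], graph: List[Node]) -> List[Packet]:
    """Scalar model for comparison: every packet walks the graph on its own."""
    out: List[Packet] = []
    for packet in packets:
        single = [packet]
        for node in graph:
            single = node(single)
            if not single:
                break
        out.extend(single)
    return out
```

Both functions produce the same result for the same input. The difference is in how the work is ordered: in the vector model each node's code and data are touched once per batch rather than once per packet, which is where the throughput benefit of batching is generally expected to come from.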

Next, this article will look at a brief overview of the ML/AI model in the OCTEON 10 from a high-level development point of view.

OCTEON's AI/ML Inferences

The integrated hardware-accelerated ML/AI engine reduces the number of nodes that network data must transit, which lowers latency, and it is said to operate at under 2 W.

This reduction is said to translate into a 100x improvement over software implementations of a given model.

The Marvell machine learning toolchain. Screenshot used courtesy of Marvell

VPP & ML-optimized hardware wrap up the key features. How does the OCTEON 10 stack up when selecting a DPU?

Scalability for Power and Performance

With scalability in mind, Marvell designed the OCTEON 10 as a family of processors to select from. It comes in four variants, ranging from the CN103xx to the DPU400.

The key specifications for each class of chip in the family can be seen below. Notably, Marvell states that the power envelope of its newest DPU is 50% lower than that of its previous-generation DPU.

Breakdown of the different family members. Screenshot used courtesy of Marvell

Marvell claims the OCTEON 10 makes significant improvements across the board, including more high-speed I/O channels, a higher base frequency, and enhanced compute potential. Additionally, the advanced network management technology, vector packet processing, hardware-based ML/AI, and a scalable chipset will allow designers to select the right OCTEON 10 DPU for their needs.

The inclusion of the Neoverse N2 ARMv9 core is about as cutting-edge as the technology gets at this moment. Among a host of significant benefits, the N2 offers SVE2 extensions, along with built-in IPSec technology and secure domain virtualization in the trusted zone to protect application data from potentially malicious code.

With data centers being a hot topic, innovations, especially those integrating the new ARMv9 architecture, are likely to become more prevalent in the near future.
