Skin-Like Sensors and AI Offer (Surprisingly) Accurate Hand Gesture Recognition

Scientists in Singapore have developed an AI system that recognizes hand gestures by combining wearable skin-like sensors with computer vision.

Human hand gesture recognition systems have come a long way over the last decade. Today, the technology lends itself to many cutting-edge applications, such as surgical robots in the healthcare field. However, the precision of gesture recognition can be hampered by low-quality data from wearable sensors, often because the sensors are bulky and make poor contact with the wearer's skin.

Now, scientists from Nanyang Technological University, Singapore (NTU Singapore) say they’ve developed an artificial intelligence (AI) system that’s capable of recognizing hand gestures by combining stretchable skin-like strain sensors with computer vision technology.

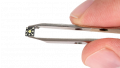

The transparent, stretchable strain sensor is shown here adhering to the wearer’s skin. In the images on the screen, the sensor cannot be seen at all. Image used courtesy of NTU Singapore

A “Bioinspired” Data Fusion System

To tackle this challenge, the NTU Singapore team has created a “bioinspired” data fusion system that uses skin-like stretchable strain sensors in conjunction with an AI system. Together, these technologies resemble the way the human brain processes skin sensation and vision together.

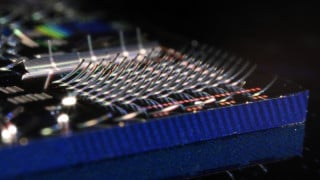

The bioinspired system, which is explained in more detail in Nature Electronics, is made up of three neural network approaches: a convolutional neural network, which is a machine learning network for early visual processing; a multilayer neural network for somatosensory information processing; and a sparse neural network that fuses the visual and somatosensory information together.

The bioinspired somatosensory-visual learning framework. Image (modified) used courtesy of Nature Electronics

Combined, this creates a system that is able to more accurately and efficiently recognize human gestures.
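
To make the three-stage design more concrete, here is a minimal sketch in plain Python. It is not the team's implementation: the layer sizes, the hand-picked weights, the pruning mask, and every name in it (`conv2d_valid`, `dense`, `sparse_dense`, `recognize`, `PARAMS`) are assumptions chosen for illustration. A single small convolution stands in for the visual network, one dense layer stands in for the somatosensory network, and a masked linear layer stands in for the sparse fusion network.

```python
from math import exp

def conv2d_valid(image, kernel):
    """Valid 2D cross-correlation plus ReLU (toy stand-in for the visual CNN)."""
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for i in range(len(image) - kh + 1):
        row = []
        for j in range(len(image[0]) - kw + 1):
            s = sum(image[i + a][j + b] * kernel[a][b]
                    for a in range(kh) for b in range(kw))
            row.append(max(0.0, s))
        out.append(row)
    return out

def dense(x, weights, bias):
    """Fully connected layer with ReLU (toy stand-in for the somatosensory network)."""
    return [max(0.0, sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, bias)]

def sparse_dense(x, weights, mask, bias):
    """Linear layer whose connections are pruned by a 0/1 mask (toy fusion stage)."""
    return [sum(w * m * v for w, m, v in zip(w_row, m_row, x)) + b
            for w_row, m_row, b in zip(weights, mask, bias)]

def softmax(z):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(z)
    e = [exp(v - top) for v in z]
    total = sum(e)
    return [v / total for v in e]

def recognize(image, strain, params):
    """Fuse visual and strain-sensor features and return gesture probabilities."""
    visual = [v for row in conv2d_valid(image, params["kernel"]) for v in row]
    somato = dense(strain, params["w_s"], params["b_s"])
    fused = visual + somato  # concatenate the two feature vectors
    logits = sparse_dense(fused, params["w_f"], params["mask"], params["b_f"])
    return softmax(logits)

# Hand-picked illustrative parameters; a real system would learn these from data.
PARAMS = {
    "kernel": [[1.0, 0.0], [0.0, 1.0]],
    "w_s": [[1.0, 0.0], [0.0, 1.0]],
    "b_s": [0.0, 0.0],
    "w_f": [[1.0, 0.5, 0.5, 1.0, 1.0, -1.0],
            [-1.0, 0.5, 0.5, -1.0, -1.0, 1.0]],
    "mask": [[1, 0, 0, 1, 1, 1],
             [1, 0, 0, 1, 1, 1]],  # zeros prune two visual connections
    "b_f": [0.0, 0.0],
}

image = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]  # toy 3x3 "camera frame"
strain = [0.8, 0.1]                        # toy readings from two strain sensors
probs = recognize(image, strain, PARAMS)
print(probs)  # one probability per hypothetical gesture class
```

The point of the sketch is the data flow: each modality is processed by its own network first, and only the resulting features are merged. Because the fusion stage sees both feature sets, a clean strain reading can still carry the decision when the image is degraded.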

Stretchable Strain Sensor

To capture reliable sensory data, the NTU Singapore team fabricated a transparent, stretchable strain sensor made out of single-walled carbon nanotubes. The sensor, which is flexible and can adhere easily to the skin, cannot be seen in photographs.

Flow diagram for somatosensory-visual data collection. Image (modified) used courtesy of Nature Electronics

This, the researchers say, is a major improvement over rigid wearable sensors that cannot make sufficient contact with the skin for accurate data collection. Because the Singapore team’s sensors sit flush with the skin, the device acquires high-quality signals, which is vital for high-precision recognition.

Guiding a Robot Through a Maze

As a proof of concept, the team tested their bioinspired AI system by controlling a robot and guiding it through a maze with only hand gestures. This hand gesture recognition system guided the robot through the maze with zero errors, compared to six recognition errors made by recognition systems based on vision alone.

The accuracy of the team’s AI system was maintained even when it was tested under poor conditions, such as high levels of background noise and poor lighting. In the dark, the system achieved recognition accuracy of almost 97%.

NTU Singapore scientists combined computer vision and skin-like electronics to guide a robot through a maze. Image used courtesy of NTU Singapore
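
The article does not describe the gesture vocabulary or the robot's command set, so the following is only a hypothetical sketch of how recognized gestures might be turned into maze moves. The gesture names, the `GESTURE_TO_MOVE` table, the `drive` function, and the grid layout are all invented for illustration.

```python
# Hypothetical gesture vocabulary; the article does not list the real one.
GESTURE_TO_MOVE = {
    "point_up": (-1, 0),
    "point_down": (1, 0),
    "point_left": (0, -1),
    "point_right": (0, 1),
}

def drive(maze, start, gestures):
    """Apply recognized gestures in order; return (final_position, blocked_moves)."""
    row, col = start
    blocked = 0
    for gesture in gestures:
        d_row, d_col = GESTURE_TO_MOVE[gesture]
        next_row, next_col = row + d_row, col + d_col
        inside = 0 <= next_row < len(maze) and 0 <= next_col < len(maze[0])
        if inside and maze[next_row][next_col] != "#":
            row, col = next_row, next_col
        else:
            blocked += 1  # wall or edge: the robot stays where it is
    return (row, col), blocked

# Toy maze: S = start, G = goal, # = wall, . = open floor.
MAZE = [
    "S.#",
    "#.#",
    "#.G",
]

correct = ["point_right", "point_down", "point_down", "point_right"]
print(drive(MAZE, (0, 0), correct))

# One misrecognized gesture at the start is enough to miss the goal.
misread = ["point_down", "point_down", "point_down", "point_right"]
print(drive(MAZE, (0, 0), misread))
```

In this toy setup a single recognition error at the first step leaves the robot short of the goal, which gives a sense of why the gap between zero and six errors matters for a control task.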

The research team is now exploring the possibility of building VR and AR systems based on its AI system for applications spanning healthcare, entertainment, and home-based rehabilitation.
