Designing the Next Generation of Energy-Efficient AI Devices with Spiraling Circuits

Machine learning allows computers to be “trained” using data, enabling better and more precise predictions regarding a given outcome.

This capability underpins artificial intelligence (AI); however, it requires a relatively large amount of electrical energy. As AI improves and its potential applications and use cases grow, concerns are rising about the impact it will have on climate change in the years to come.

Offering a potential solution to this challenge, researchers from the Institute of Industrial Science at the University of Tokyo have reportedly designed and built specialized computer hardware for AI applications, consisting of stacks of memory modules arranged in a three-dimensional spiral.

Stacking Resistive RAM Modules

The novel design involves stacking resistive random-access memory (RAM) modules with oxide semiconductor (IGZO) access transistors in a three-dimensional spiral. Placing on-chip non-volatile memory close to the processor makes machine learning much faster and more energy-efficient.

This is because electrical signals travel a shorter distance than in conventional computer hardware. Since training algorithms require many operations to run in parallel, stacking multiple layers is a natural step.

"For these applications, each layer's output is typically connected to the next layer's input. Our architecture greatly reduces the need for interconnecting wiring," says first author Jixuan Wu.

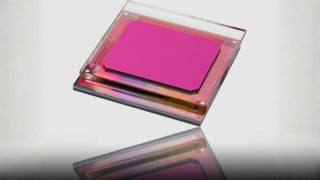

A product graphic image of the integrated 3D-circuit architecture for AI applications with spiraling stacks of memory modules. Image credit: Institute of Industrial Science, The University of Tokyo

Advancing Energy Efficiency

To make the device even more energy-efficient, the Tokyo-based research team implemented a system of binarized neural networks. Instead of allowing the parameters to take any value, the network restricts each one to either +1 or -1. This greatly simplifies the hardware and compresses the amount of data that must be stored.
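The effect of binarization can be illustrated with a minimal software sketch. It is not the research team's implementation: the sign-based rule, the choice to map zero to +1, the bit-packing layout, and all function names are assumptions made for illustration. The sketch shows how each parameter shrinks to a single bit and how a dot product of two +1/-1 vectors reduces to an XNOR followed by a bit count.

```python
def binarize(weights):
    """Map each real-valued weight to +1 or -1 by its sign (assumption: 0 maps to +1)."""
    return [1 if w >= 0 else -1 for w in weights]


def pack_bits(signs):
    """Pack a list of +1/-1 values into one integer, one bit per value (+1 -> 1, -1 -> 0)."""
    bits = 0
    for i, s in enumerate(signs):
        if s == 1:
            bits |= 1 << i
    return bits


def binary_dot(a_bits, b_bits, n):
    """Dot product of two packed +1/-1 vectors of length n using XNOR and a bit count."""
    mask = (1 << n) - 1
    agree = ~(a_bits ^ b_bits) & mask  # a bit is set wherever the two signs match
    matches = bin(agree).count("1")
    # Each match contributes +1 and each mismatch contributes -1.
    return 2 * matches - n


# Hypothetical example values, not data from the study.
weights = [0.7, -1.3, 0.2, -0.1, 2.4, -0.9, 0.0, -3.1]
inputs = [1, -1, 1, 1, 1, -1, -1, -1]

w_signs = binarize(weights)
reference = sum(w * x for w, x in zip(w_signs, inputs))  # ordinary multiply-and-add
fast = binary_dot(pack_bits(w_signs), pack_bits(inputs), len(inputs))

print(w_signs)
print(reference, fast)
```

In this example, eight weights stored as 32-bit floating-point numbers would occupy 32 bytes, while the packed binary version fits in a single byte, and the multiply-and-add loop is replaced by two bitwise operations and a count.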

The research team tested the device on the task of interpreting a database of handwritten digits. The results showed that increasing the size of each circuit layer could improve the algorithm's accuracy, to as high as 90%. “In order to keep energy consumption low as AI becomes increasingly integrated into daily life, we need more specialized hardware to handle these tasks efficiently,” said research member Masaharu Kobayashi.

The research team is hopeful that its work will pave the way for the next generation of energy-efficient AI devices. According to the researchers, it is a key step towards improving the Internet of Things (IoT).
