What Can Hackers Learn from Your Device’s Emissions? How Side Channel Attacks Thwart Cybersecurity

Side channel attacks can be used in a variety of ways to take advantage of unintended acoustic, mechanical, and electromagnetic signals to recreate data.

As we entrust more and more of our lives and information to connected devices, cybercrime keeps growing with them. We often think of encryption as our best line of defense, but what happens when the device itself is the vulnerability?

A side-channel attack is a cryptographic attack that exploits the physical emissions of a device running a cryptosystem, rather than vulnerabilities in the algorithm or security system itself. Those emissions can be acoustic, mechanical, or electromagnetic, and an attacker who captures them may be able to reconstruct the data being processed.

Van Eck phreaking is a method of eavesdropping first described in a 1985 publication by Wim van Eck, a Dutch computer scientist. It reconstructs data from the electromagnetic radiation that electronics emit unintentionally. At the time, the targets were CRT video displays.

However, this form of digital espionage has a much longer history. During World War II, a Bell Telephone engineer noticed that an oscilloscope on the other side of his lab spiked whenever encrypted messages were sent on a teletype. He eventually realized that the teletype's emissions alone were enough to recover the plain text of the otherwise encrypted messages. This is one of the first known side-channel attacks in the digital world.

This decoding method eventually evolved into TEMPEST espionage. The NSA's use of it came to light when documents were declassified in 2008, notably a formerly secret paper titled “TEMPEST: A Signal Problem”.

Today, side-channel and TEMPEST eavesdropping remain a real vulnerability. Using electromagnetic, mechanical, and acoustic signals, it is possible to eavesdrop on what someone is looking at, what messages they are sending, or what their passwords are. Some have described TEMPEST as one of the greatest security threats to digital devices today: every device produces some kind of emission that makes it possible, yet few consumer devices do anything to protect against it. There is even a field of cybersecurity dedicated to this class of vulnerability: “Emission Security” (EMSEC).

It is an interesting and unintended side effect of our digital lives. While some methods require considerable technical knowledge to pull off, a determined attacker may well be able to collect sensitive data.

Here are some examples of side-channel attacks on various devices.

Stealing AES Keys Using Less Than $300 of Equipment

Fox-IT, a high-assurance security firm, recently demonstrated a near-field TEMPEST attack that recovered cryptographic keys under conditions closely mimicking real-world scenarios.

Recovering cryptographic keys this way had already been demonstrated against asymmetric encryption algorithms, whose mathematical structure makes it possible to amplify the leakage from the bit of interest. AES, however, is a symmetric cipher and lacks that predictable structure.

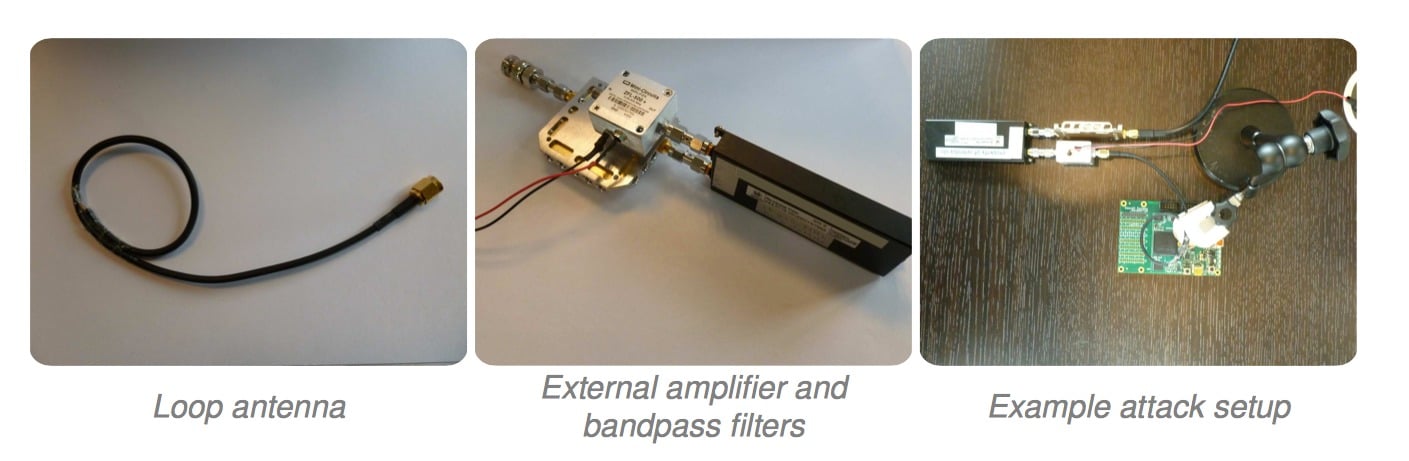

The team started with some fairly simple tools: a loop antenna made from spare cable and tape, and cheap amplification hardware from a general hobbyist website. The team notes that inexpensive recording hardware also works, including a USB dongle costing less than $30, although it limits the attack distance to a few centimeters.

Hardware required for the TEMPEST attack. Image courtesy of Fox-IT.

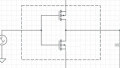

The demonstration targets a SmartFusion2 FPGA with an ARM Cortex-M3 core running AES-256 encryption from OpenSSL. Once recording begins, the emissions show that the processor passes through distinct stages, each with its own I/O and power-consumption activity: idle, I/O, key schedule, and the 14 rounds of encryption. The measurement has to be repeated over many encryptions to build a model of the encryption activity.

Then, by correlating the recorded emissions with guesses for each key byte, the key can be recovered within 8192 guesses (256 candidate values for each of the key's 32 bytes), with each byte taking only a few seconds. The team points out that a regular brute-force attack would take 2^256 guesses and “would not complete before the end of the universe”.
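The byte-at-a-time idea behind the 8192 figure can be sketched with a generic correlation analysis on simulated data. This is not Fox-IT's pipeline. It assumes each "trace" is a single sample equal to the Hamming weight of the first-round AES S-box output plus Gaussian noise, and the key, noise level, and trace count are made up for illustration. For simplicity every one of the 32 bytes is simulated with that same first-round model; on real AES-256 the second half of the key would have to be attacked at the next round, and real traces contain many samples that must first be aligned.

```python
import numpy as np

def gf_mul(a, b):
    # Multiply two bytes in GF(2^8) modulo the AES polynomial x^8 + x^4 + x^3 + x + 1
    p = 0
    for _ in range(8):
        if b & 1:
            p ^= a
        carry = a & 0x80
        a = (a << 1) & 0xFF
        if carry:
            a ^= 0x1B
        b >>= 1
    return p

def build_sbox():
    # AES S-box: multiplicative inverse in GF(2^8) (0 maps to 0), then the affine transform
    sbox = np.zeros(256, dtype=np.uint8)
    for x in range(256):
        inv = next((y for y in range(1, 256) if gf_mul(x, y) == 1), 0)
        s = inv
        for i in range(1, 5):
            s ^= ((inv << i) | (inv >> (8 - i))) & 0xFF  # rotate left by i bits
        sbox[x] = s ^ 0x63
    return sbox

SBOX = build_sbox()
HW = np.array([bin(v).count("1") for v in range(256)])  # Hamming weight table

def simulate_leakage(key_byte, n_traces, noise_sd, rng):
    # Assumed leakage model: HW(SBOX[plaintext ^ key]) plus Gaussian noise
    plaintexts = rng.integers(0, 256, size=n_traces, dtype=np.uint8)
    samples = HW[SBOX[plaintexts ^ key_byte]] + rng.normal(0.0, noise_sd, n_traces)
    return plaintexts, samples

def recover_key_byte(plaintexts, samples):
    # Score all 256 hypotheses by |Pearson correlation| with the measurements
    scores = np.empty(256)
    for guess in range(256):
        model = HW[SBOX[plaintexts ^ guess]]
        scores[guess] = abs(np.corrcoef(model, samples)[0, 1])
    return int(np.argmax(scores))

rng = np.random.default_rng(1)
secret_key = rng.integers(0, 256, size=32, dtype=np.uint8)  # stand-in AES-256 key
recovered, guesses = [], 0
for key_byte in secret_key:
    pts, obs = simulate_leakage(int(key_byte), 2000, 1.0, rng)
    recovered.append(recover_key_byte(pts, obs))
    guesses += 256
print(guesses)  # 32 bytes x 256 candidates = 8192
print(bytes(recovered) == secret_key.tobytes())
```

Because each byte is scored independently, the work grows as 32 × 256 rather than 256^32 = 2^256, which is the gap between the attack and brute force.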

Image courtesy of Fox-IT.

In the end, the team recovered the AES key from an electromagnetic leak, carrying out a TEMPEST attack in a trivial amount of time with only inexpensive equipment. The leak stems from the AHB bus that connects the on-chip memory to the Cortex-M3 core.

Acquiring Smartphone Password from Accelerometer Data

Smartphones have many built-in sensors, including gyroscopes and accelerometers, which are useful in a variety of applications such as gaming and navigation. However, researchers at the University of Pennsylvania demonstrated that accelerometer data can also be used to guess smartphone passwords in a side-channel attack.

The attack requires that the accelerometer data be recorded, stored, or transmitted in some way, which would most likely require a malicious app. Once such an app is installed and the data is acquired, however, the recorded motion can be correlated with screen gestures to guess PINs or unlock patterns.

The team tested their method on 24 users entering PINs or patterns, collecting more than 9,600 samples. For subjects who were not in motion, PINs were uncovered within five guesses 43% of the time, and patterns 73% of the time. When motion such as walking was introduced, those figures dropped to 20% for PINs and 40% for patterns.
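"Within five guesses" is a top-k success rate: a trial counts as a success if the true PIN appears among the attacker's five most likely candidates. The sketch below computes that metric; the ranked candidate lists and true PINs are made-up toy data, not the study's, and how the study produced its rankings is not described here.

```python
def success_within(ranked_guesses, truths, k=5):
    # A trial succeeds if the true secret is among the first k ranked guesses
    hits = sum(truth in ranked[:k] for ranked, truth in zip(ranked_guesses, truths))
    return hits / len(truths)

# Hypothetical classifier output: candidate PINs ordered from most to least likely
ranked = [
    ["1234", "1111", "0000", "2580", "1212", "7777"],
    ["2580", "1234", "0000", "1111", "5555", "9999"],
    ["0852", "2580", "1470", "3690", "1234", "0000"],
    ["1234", "2222", "3333", "4444", "5555", "1111"],
]
truths = ["1212", "9999", "2580", "1111"]
print(success_within(ranked, truths))       # 0.5
print(success_within(ranked, truths, k=6))  # 1.0
```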

The team highlights that sensor security in smartphones is lacking, since a great deal of information can be inferred from sensor data. For example, even if an attacker never gets hold of the phone itself, the user may use the same PIN for their ATM card.

Open Source Software to Monitor Your Monitor

TempestSDR is an open-source tool that, paired with an antenna and an SDR (plus an accompanying ExtIO plugin), can recreate the image on a target monitor in real time.

A tutorial from RTL-SDR walks users step by step through setting up the software and hardware. In the demonstration, emissions leaking from a Dell monitor connected over DVI produced a fairly clear image from within the same room. From another room, the image could still be picked up but was much blurrier. The tutorial's author suggests that a high-gain directional antenna could probably produce clearer images.

Tests on HDMI monitors produced much weaker images because less unintended emission was leaking, and on AOC monitors no emissions were detected at all.
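The reason a monitor's leakage can be turned back into a picture is that the display sends its pixels in a fixed raster order at a fixed pixel clock. If the receiver knows (or estimates) the total width and height of each frame, including blanking, it can fold the sampled signal into rows and average several frames so the static image adds up while random noise averages out. The sketch below illustrates that idea on a tiny made-up "monitor"; it assumes one sample per pixel that is perfectly locked to the pixel clock, which a real tool such as TempestSDR has to work hard to approximate. The 2200 × 1125 total for 1080p at 60 Hz is the standard CEA-861 timing, used here only for the pixel-clock arithmetic.

```python
import numpy as np

def pixel_clock_hz(h_total, v_total, refresh_hz):
    # Every pixel slot, blanking included, is sent once per frame
    return h_total * v_total * refresh_hz

def fold_frames(samples, h_total, v_total):
    # Cut the sample stream into whole frames, lay each out as a raster,
    # and average them so the picture accumulates while noise averages out
    frame_len = h_total * v_total
    n_frames = len(samples) // frame_len
    frames = samples[: n_frames * frame_len].reshape(n_frames, v_total, h_total)
    return frames.mean(axis=0)

# Toy display (hypothetical): 80 x 50 pixel slots including blanking
H_TOTAL, V_TOTAL, N_FRAMES = 80, 50, 64
screen = np.zeros((V_TOTAL, H_TOTAL))
screen[10:30, 20:60] = 1.0  # a bright window on a dark desktop

rng = np.random.default_rng(0)
# Assumption: one noisy sample per pixel, perfectly synchronized
samples = np.tile(screen.ravel(), N_FRAMES) + rng.normal(0.0, 1.0, N_FRAMES * screen.size)

one_frame = fold_frames(samples[: screen.size], H_TOTAL, V_TOTAL)
averaged = fold_frames(samples, H_TOTAL, V_TOTAL)
r_single = np.corrcoef(one_frame.ravel(), screen.ravel())[0, 1]
r_avg = np.corrcoef(averaged.ravel(), screen.ravel())[0, 1]
print(pixel_clock_hz(2200, 1125, 60))  # 148500000 (148.5 MHz)
print(round(r_single, 2), round(r_avg, 2))
```

Averaging 64 frames cuts the noise standard deviation by a factor of 8, which is also why a weaker leak (as on the HDMI monitors) yields a fainter, noisier image for the same capture time.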

Despite all the time and effort spent defending our devices with security algorithms, things like EM leaks can still give away vitally important information. What steps could designers take to prevent such emissions? We'll likely find out in the coming years as the tech industry as a whole tries to stay a step ahead of cybercriminals.

Feature image courtesy of RTL-SDR.


one thing that might be done is to deliberately broadcast noise on the side channels. i don’t mean white noise, or pink noise, or any type of statistical noise; instead, some extra circuitry could be included on each vulnerable device (the keyboard, the mouse, the motherboard, memory modules, etc.), which would be designed to generate RF signals that are similar to those of the device, and would be fed appropriate data streams from the computer itself. this circuitry and data wouldn’t actually affect use of the device, but the *emissions* from the circuitry would be going out at the same time that real use of the device was occurring and leaking data. an attacker would see a continuous stream of appropriate data at all times, making it much more difficult to pick out the data he wants.

and yes, this would incur extra expense, so not every user would want to do it, nor would those who hid their emissions necessarily need to protect every vulnerable channel, depending upon their circumstances. the most basic devices are the keyboard and mouse, so those would be the most-often protected devices, probably followed by the display. shielding could be incorporated into the designs of vulnerable devices, and apparently, in the case of some displays, already is to good effect, but data obfuscation could also be used. since two-way communication between the computer and an external device is usually already available via USB, even where it isn't used, modern USB devices would be the natural candidates; older non-USB devices would be less amenable to modification/redesign and probably not worth the effort. internal devices, like SIMMs, DIMMs, and the motherboard, can probably also be modified to add data noise, but i’m just going to talk about keyboards and mice here.

the keyboard could be fed a stream of data that can be generated by the keyboard (which would be locale-dependent); this data would pass through the extra circuitry and be “broadcast” to cloak real keystrokes. since it would not be known *when* a user would be hitting keys, some fake data would overlap real key hits, so the cloaking data would have to be broadcast so that its “keystrokes” also sometimes overlapped as well. to help hinder the data acquisition of the bad guys, there might be several such fake data streams, perhaps as many as 100 or so. the data could not be random, because if they were, statistical methods could be used by the bad guys to tease out the real keystrokes; the best source for “real” fake data is the real data in documents on the system disk or other persistent storage. a driver on the computer could gather appropriate data from documents in selected folders (into which *no* sensitive data could ever be placed) and send packets for each stream of fake data to the keyboard, which would then “broadcast” each stream independently of the others. since *all* data broadcast would have been generated on a keyboard, all would look real (with the inclusion of user-appropriate typos and corrections, which can also be collected from the users of a system), and it would be difficult to determine which, if any, was the real data stream.
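The decoy-stream idea in this comment can be sketched as interleaving the real keystrokes with several streams replayed from harmless document text, so an observer sees many plausible typing streams at once. This is a minimal illustration under stated assumptions, not a tested countermeasure: the decoy excerpts and the inter-key gaps below are hypothetical stand-ins for "documents in selected folders" and for timing recorded from real users, the stream IDs exist only for bookkeeping (an attacker would not see them), and the typos and corrections the comment mentions are not modeled.

```python
import heapq
import random

def keystroke_events(text, stream_id, gaps, start, rng):
    # Replay text as (time, stream_id, key) events using recorded inter-key gaps
    t = start
    events = []
    for key in text:
        t += rng.choice(gaps)
        events.append((t, stream_id, key))
    return events

def cloak(real_events, decoy_texts, gaps, seed=0):
    # One decoy stream per document excerpt, each starting at a random offset
    # so decoy "keystrokes" sometimes overlap the real ones
    rng = random.Random(seed)
    streams = [real_events]
    for sid, text in enumerate(decoy_texts, start=1):
        streams.append(keystroke_events(text, sid, gaps, rng.uniform(0.0, 0.5), rng))
    return list(heapq.merge(*streams))  # everything, in emission order

GAPS = [0.11, 0.14, 0.19, 0.23, 0.30]  # hypothetical recorded gaps, in seconds
real = keystroke_events("my secret", 0, GAPS, 0.0, random.Random(42))
decoys = ["quarterly report draft", "meeting notes for tuesday", "lunch order"]
emitted = cloak(real, decoys, GAPS)
real_share = sum(1 for e in emitted if e[1] == 0) / len(emitted)
print(len(emitted), round(real_share, 3))
```

With K decoy streams, the real keystrokes are one of K + 1 equally plausible streams, which is the point the comment makes about using around 100 of them.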

pointing devices are quite different from a keyboard, and also different from one another; a mouse does not work like a touchscreen, and not all mice work the same way. thus, a different strategy must be used for each. there are not likely to be files containing appropriate data readily available, but there are other data sources, and appropriate data can easily be generated. in an office environment, there will be multiple users, so the raw data from the mouse on each computer could be sent to a central server, which could relay it to other computers and record it; previously-recorded mouse data could be served up as well. because optical mice are essentially lo-res cameras that do image recognition to detect motion, photographs of the surfaces used to operate the mice (the desktop surfaces at each workstation) could have their resolution reduced, then fed to an algorithm that traces out random, but appropriate, paths across the surface; “appropriate” paths, in this case, could be determined by recording the data from a group of users, and should be fairly easy to generate. the data from these random paths (and clicks, etc.) could be fed to the mouse, again in multiple streams, so that they look realistic.
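The "random, but appropriate, paths" step could look something like the sketch below: a smooth random walk that turns gently, draws its speed from recorded values, bounces off the edges of the surface, and outputs the per-report (dx, dy) motion a mouse sends. It covers only path generation, not the reduced-resolution surface photographs the comment describes, and the speed list, surface size, and turn rate are hypothetical placeholders for statistics gathered from real users.

```python
import math
import random

def decoy_mouse_deltas(n_reports, speeds, width, height, seed=0):
    # Smooth random walk over a width x height surface, returned as the
    # (dx, dy) counts a mouse would report
    rng = random.Random(seed)
    x, y = width / 2, height / 2
    heading = rng.uniform(0.0, 2 * math.pi)
    deltas = []
    for _ in range(n_reports):
        heading += rng.gauss(0.0, 0.3)  # gentle turns rather than jitter
        speed = rng.choice(speeds)
        dx = round(speed * math.cos(heading))
        dy = round(speed * math.sin(heading))
        if not 0 <= x + dx <= width:   # bounce off the left/right edges
            dx, heading = -dx, math.pi - heading
        if not 0 <= y + dy <= height:  # bounce off the top/bottom edges
            dy, heading = -dy, -heading
        x, y = x + dx, y + dy
        deltas.append((dx, dy))
    return deltas

SPEEDS = [2, 3, 5, 8, 12]  # hypothetical per-report speeds from recorded users
streams = [decoy_mouse_deltas(500, SPEEDS, 800, 600, seed=s) for s in range(3)]
print([len(s) for s in streams])
```

Each seed yields an independent stream, matching the comment's suggestion of feeding several decoy streams to the device at once.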

of course, this is just blue-sky stuff until somebody actually checks it out; it may be cheaper to shield the snot out of everything, or perhaps to encrypt every data channel. my thought is just that we shouldn’t make it easy for the bad guys to grab data.