Historical Engineers: How Claude Shannon Ushered In the Digital Age

Information theory laid the foundation for the modern computer. Where did Shannon get his inspiration?

Claude Shannon is perhaps one of the most influential minds in electrical engineering, and in the digital age at large.

Shannon (born in Petoskey, Michigan, on April 30, 1916) developed an unwavering love for mathematics as he pursued higher education. He earned dual bachelor’s degrees in mathematics and electrical engineering from the University of Michigan.

Photograph of Claude Shannon. Photograph taken by Alfred Eisenstaedt and used courtesy of the Institute for Advanced Study

Claude made his first official foray into mathematical research shortly thereafter. At MIT, he studied differential equations alongside Vannevar Bush, using Bush’s own differential analyzer. His quest for knowledge surged following a 1937 summer internship at Bell Laboratories in New York. He earned a master’s degree in electrical engineering and a doctorate in mathematics at MIT, both within three years.

Claude maintained a 31-year relationship with Bell Labs that lasted until 1972. During that time, he worked on missile control systems, became a visiting professor at MIT, and secured a permanent professorship there in 1958. He became a professor emeritus two decades later.

The Thesis That Gave Rise to Modern Computers

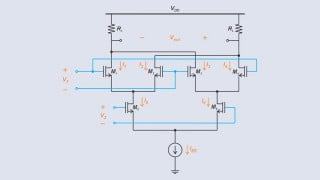

Shannon’s years in academia and beyond produced his greatest technological contributions. His master’s thesis, “A Symbolic Analysis of Relay and Switching Circuits,” brought him immense acclaim. The paper laid out the theoretical foundations of digital circuits using Boolean algebra. (For more information on the origins of Boolean algebra, see our past historical engineers article on George Boole.)

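These circuits are essential to modern computing and telecommunications. Shannon mapped Boolean algebra onto electrical switches: taking a closed contact as true, contacts wired in series behave like AND and contacts wired in parallel behave like OR. Certain relay arrangements could therefore solve Boolean algebra problems and even perform binary arithmetic. This emphasis on “computer logic” caught fire among his peers.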
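A minimal Python sketch of this mapping follows. It is an illustration, not code from the thesis: the helper names `series`, `parallel`, and `half_adder` are hypothetical, and a closed contact is modeled as `True`. Because series contacts conduct only when all are closed (AND) and parallel contacts conduct when any one is closed (OR), combining the two is enough to build a one-bit adder.

```python
# Model a closed switch (or energized relay contact) as True, an open one as False.

def series(*switches: bool) -> bool:
    """Current flows through switches in series only if every one is closed (AND)."""
    return all(switches)


def parallel(*switches: bool) -> bool:
    """Current flows through switches in parallel if any one is closed (OR)."""
    return any(switches)


def half_adder(a: bool, b: bool) -> tuple[bool, bool]:
    """Add two one-bit numbers using only series and parallel contacts.

    `not a` and `not b` stand in for normally-closed relay contacts,
    which conduct when their relay is NOT energized.
    Returns (sum_bit, carry_bit).
    """
    sum_bit = parallel(series(a, not b), series(not a, b))  # exclusive OR
    carry_bit = series(a, b)  # AND
    return sum_bit, carry_bit


if __name__ == "__main__":
    for a in (False, True):
        for b in (False, True):
            s, c = half_adder(a, b)
            print(f"{int(a)} + {int(b)} = carry {int(c)}, sum {int(s)}")
```

Running it prints all four input combinations, which together reproduce the truth table of one-bit binary addition.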

On the telecommunications side, Claude devised methods for simplifying the electromagnetic relay arrangements used in telephone routing switches.
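One way to confirm that a simplified relay network still does the same job is to compare both versions on every input combination. The sketch below assumes that approach: Shannon worked through Boolean identities algebraically, and this brute-force truth-table check is a swapped-in technique that only scales to small networks. The function names are hypothetical.

```python
from itertools import product
from typing import Callable


def equivalent(f: Callable[..., bool], g: Callable[..., bool], n_inputs: int) -> bool:
    """Return True if two switching functions agree on every combination of inputs."""
    return all(
        f(*inputs) == g(*inputs)
        for inputs in product((False, True), repeat=n_inputs)
    )


def original(x: bool, y: bool) -> bool:
    """Two parallel branches, each with two contacts in series: four contacts total."""
    return (x and y) or (x and not y)


def with_redundant_branch(x: bool, y: bool) -> bool:
    """A single x contact in parallel with an x-and-y branch: three contacts total."""
    return x or (x and y)


def simplified(x: bool, y: bool) -> bool:
    """A single contact on x."""
    return x


if __name__ == "__main__":
    print(equivalent(original, simplified, 2))               # True
    print(equivalent(with_redundant_branch, simplified, 2))  # True
```

The first identity replaces four contacts with one; the second drops a branch that can never change whether current flows.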

Bell Labs commonly used “human computers” to operate switchboards and other computational machines prior to these discoveries. These individuals had to manually find mathematical solutions using predefined procedures.

An operator finding mathematical solutions by hand. Image used courtesy of Bell Laboratory

This antiquated approach to computation preceded the digital era that Shannon helped usher in. Operators once had to rely on early calculating machines, isographs, and integraphs to achieve similar results. These same operators gained access to modern computers just a few years after Claude Shannon’s findings were publicized.

The Birth of Information Theory

In 1948, Shannon brought another monumental advance to the forefront when he published “A Mathematical Theory of Communication,” building upon the work of predecessors like Harry Nyquist.

Nyquist’s earlier studies revealed that communication channels had maximum carrying capacities, or data transmission rates. He derived formulas for the maximum rate at which pulses could be sent over a telegraph channel of a given bandwidth. Shannon drew upon this knowledge and the findings of another pioneer, R.V.L. Hartley, who proposed a logarithmic measure of information.
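The noiseless rates behind Nyquist’s and Hartley’s work can be sketched as follows, assuming the standard textbook forms: a channel of bandwidth B hertz carries at most 2B independent pulses per second, and a pulse with M distinguishable levels carries log2 M bits. The function names and the 3 kHz, four-level example are hypothetical.

```python
import math


def nyquist_pulse_rate(bandwidth_hz: float) -> float:
    """Maximum independent pulses per second over a channel of the given bandwidth (2B)."""
    return 2 * bandwidth_hz


def hartley_bit_rate(bandwidth_hz: float, levels: int) -> float:
    """Noiseless bit rate when each pulse takes one of `levels` distinguishable values."""
    return nyquist_pulse_rate(bandwidth_hz) * math.log2(levels)


if __name__ == "__main__":
    # Hypothetical example: a 3 kHz channel carrying 4-level pulses.
    print(hartley_bit_rate(3000, 4))  # 12000.0
```

Neither formula accounts for noise; in this model, adding more levels raises the rate without limit.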

A Fresh Perspective

Information theory challenged conventional wisdom about communication. Signals had previously been interpreted in conjunction with the messages they carried. Shannon argued for separating the two, since a message’s meaning often isn’t proportional to its size. Interpreting meaning depends on context, while the engineering task is to deliver the message intact.
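Shannon’s measure of information depends only on how probable each message is, not on what it means. A minimal sketch of his entropy formula, H = -Σ p·log2 p, follows; the function name and the example probabilities are hypothetical.

```python
import math


def entropy_bits(probabilities: list[float]) -> float:
    """Shannon entropy H = -sum(p * log2(p)), in bits per message.

    Zero-probability messages are skipped, since they contribute nothing.
    """
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


if __name__ == "__main__":
    # Four equally likely messages: 2 bits each, regardless of what they mean.
    print(entropy_bits([0.25, 0.25, 0.25, 0.25]))  # 2.0
    # A skewed source that almost always sends the same message.
    print(entropy_bits([0.9, 0.05, 0.03, 0.02]))
```

Four equally likely messages carry 2 bits each whether they are stock quotes or weather reports; a source that nearly always sends the same message carries less information on average.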

Claude’s information theory focused on two things: the logistics of sending and receiving messages, and the handling of any meaning contained within them. However, intended recipients could never know the true meaning of a message that wasn’t delivered successfully. Shannon’s subsequent work therefore focused on communication channel reliability, and Bell Labs was an excellent testbed for these experiments. He studied the following:

- Maximizing carrying capacity for existing channels (wires, cables, airwaves)

- Separating theoretical communications capacity from real-world capacity

- Determining channel bandwidths

- Dealing with dynamic interference (noise)

Shannon soon developed new equations relating a channel’s capacity to its bandwidth and its noise, with noise expressed through the signal-to-noise ratio. He found that as interference across a channel changes, so does the amount of information the channel can carry. Engineers could optimize communications channels accordingly using these bits of information. Under sub-optimal conditions, the challenge was to squeeze every ounce of performance from noisy lines.
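The relation between bandwidth, noise, and capacity is usually stated as the Shannon-Hartley formula, C = B·log2(1 + S/N), where B is the bandwidth in hertz and S/N is the signal-to-noise power ratio. The sketch below evaluates it for a hypothetical 3 kHz line; the helper names and the decibel figures are assumptions chosen for illustration.

```python
import math


def db_to_linear(snr_db: float) -> float:
    """Convert a signal-to-noise ratio in decibels to a plain power ratio."""
    return 10 ** (snr_db / 10)


def shannon_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley capacity C = B * log2(1 + S/N), in bits per second."""
    return bandwidth_hz * math.log2(1 + snr_linear)


if __name__ == "__main__":
    # Hypothetical voice-grade line: 3 kHz of bandwidth at 30 dB SNR.
    print(f"{shannon_capacity(3000, db_to_linear(30)):.0f} bit/s")  # prints "29902 bit/s"
    # Same line with heavier interference (10 dB SNR): the ceiling drops.
    print(f"{shannon_capacity(3000, db_to_linear(10)):.0f} bit/s")
```

Dropping the SNR from 30 dB to 10 dB lowers the ceiling to roughly a third, which is one concrete sense in which changing interference changes what a line can carry.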

Diagram from Shannon's paper, "The Relay Circuit Analyzer." Image used courtesy of Bell Laboratory

Shannon demonstrated that noise doesn’t rule out reliable communication: with suitable encoding, data can be sent at any rate below the channel’s theoretical capacity with arbitrarily few errors. His work showed that engineers could make improvements via encoding as well as system maintenance. This two-pronged approach was previously unknown.
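The trade-off that encoding introduces can be sketched with a binary symmetric channel, a standard textbook model in which noise flips each bit with probability p and the capacity is 1 - H(p) bits per use. The repetition code below is chosen only for simplicity and is not Shannon’s construction: it lowers the error rate, but only by pushing the transmission rate toward zero. Shannon’s theorem guarantees that better codes exist that keep the rate close to capacity while still driving errors down. The function names and the 10% flip probability are hypothetical.

```python
import math


def binary_entropy(p: float) -> float:
    """H(p) in bits for a binary event with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def bsc_capacity(p: float) -> float:
    """Capacity of a binary symmetric channel that flips each bit with probability p."""
    return 1 - binary_entropy(p)


def repetition_error(p: float, n: int) -> float:
    """Probability that majority voting over n copies (n odd) decodes a bit wrongly.

    Decoding fails when more than half of the n copies are flipped.
    """
    return sum(
        math.comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    p = 0.1  # hypothetical channel: 10% of bits flipped by noise
    print(f"capacity: {bsc_capacity(p):.3f} bits per channel use")
    for n in (1, 3, 5):
        print(f"repeat x{n}: rate {1 / n:.3f}, bit error {repetition_error(p, n):.4f}")
```

With p = 0.1, the capacity is about 0.531 bits per use, while majority voting over three copies cuts the bit error from 0.1 to 0.028 at a rate of only 1/3.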

Naturally, these advancements helped illuminate communication’s true value: the message itself. Operators learned how to ask the right questions, shelve the wrong ones, and target a high return-to-effort ratio. These communications endeavors blended engineering, mathematics, and logistics to transform technology in the coming decades.

An Influential Mind of the 20th Century

Claude Shannon’s contributions were truly groundbreaking. His theorems solved many problems related to digital communications and telecommunications. He made us rethink how numbers and signals could be used to tell a story, and how the medium influences transmission.

Shannon's findings led to the creation of the first chess-playing computer. Image used courtesy of MIT Museum and Bell Laboratory

Such developments paved the way for continuous communications, discrete communications, and others. They also intertwined engineering with the humanistic field of linguistics. This collaboration was in its infancy prior to Shannon's findings.

Today’s systems of encoding and decoding blossomed thanks to Shannon. Our modern systems as we know them have grown more intricate and capable as a result.

Information drawn from Bell Laboratories and George Markowsky (with Encyclopaedia Britannica).

What are the direct or indirect impacts of Shannon's findings on your day-to-day design tasks? Which engineer would you like to see discussed next? Share your thoughts in the comments below.