In-Memory Computing May Solve the AI Memory Balancing Act of Volume, Speed, and Processing

Designing memory architectures for AI/ML devices can feel like an impossible compromise among storage volume, speed, and processing. A new in-memory computing accelerator may offer a useful solution.

With the emergence of AI/ML, computing systems are faced with unprecedented memory challenges. AI/ML applications and devices are unique in that they require parallel access to huge amounts of data at the fastest speed possible and at the lowest power possible.

The memory wall has increasingly limited computing systems. Image used courtesy of the University of Washington

In this article, we’ll explore the demands being put on memory by AI/ML workloads and how engineers are starting to address these problems.

Demands for Volume

One of the reasons that AI is only emerging now is that it requires a lot of data. As a testament to this, Tesla has amassed over 1.3 billion miles of driving data to build its AI infrastructure while Microsoft needed five years' worth of continuous speech data to teach computers to talk.

Diagram of how Tesla's autopilot function "learns." Image used courtesy of Inside EVs

Clearly, to manage these huge amounts of data, memory systems are going to need to increase volume and scalability. Engineers have looked into simply adding larger memory systems, but this comes at the cost of degraded performance.

Designers have also looked into ways to store data more efficiently using data lakes, which are centralized repositories that can hold structured or unstructured data at any scale.

Demands for Speed

As AI/ML moves into mission-critical applications that require real-time decision making, speed is of the utmost importance. Think of an autonomous car: whether it can make decisions in fractions of a second can be a matter of life or death for the driver, pedestrians, or others on the road.

Dialog Semiconductor posits that the most affordable system is based on "a single chip SoC without any internal NVM." Image used courtesy of Dialog Semiconductor

Unfortunately, this requirement for speed directly conflicts with larger memory storage. The well-known memory wall of von Neumann architectures, where processors can compute faster than memory can supply data, is made worse by the fact that larger memories tend to be slower. For this reason, engineers are considering breaking the mold of the von Neumann architecture, with in-memory computing emerging as one alternative.
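To make the memory wall concrete, here is a minimal sketch of a simple bound model: a workload step takes at least as long as the slower of its compute time and its data-transfer time. The function name and all numbers are hypothetical and chosen only for illustration; they do not describe any specific device.

```python
def step_time_seconds(ops, bytes_moved, peak_ops_per_s, bandwidth_bytes_per_s):
    """Lower bound on the time for one step, plus which resource limits it.

    Simplified model: compute and data transfer overlap perfectly,
    so the slower of the two sets the pace.
    """
    compute_s = ops / peak_ops_per_s
    memory_s = bytes_moved / bandwidth_bytes_per_s
    limiter = "memory" if memory_s > compute_s else "compute"
    return max(compute_s, memory_s), limiter


# Hypothetical workload: 2 billion operations that touch 1 GB of data.
# Hypothetical hardware: 100 tera-ops/s of compute, 100 GB/s of memory bandwidth.
seconds, limiter = step_time_seconds(
    ops=2e9,
    bytes_moved=1e9,
    peak_ops_per_s=100e12,
    bandwidth_bytes_per_s=100e9,
)
print(f"{seconds * 1e3:.2f} ms per step, limited by {limiter}")
```

With these assumed figures the processor spends most of each step waiting for data, which is the situation in-memory computing aims to avoid by shortening the path between storage and arithmetic.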

Demands for Power Efficiency

Competing directly with the demand for speed is the demand for power efficiency. The first generation of AI-capable devices, like the Amazon Alexa, had to be plugged into an outlet because of their high power consumption.

Now, the next generation is aiming for standalone, battery-powered devices, making power efficiency paramount.

Effects of data movement energy. Image used courtesy of Feng Shi et al.

From a conventional view, on-chip dynamic power consumption sets power against speed: the higher the system clock frequency, the higher the power consumption. More significant than this, however, is that with the breakdown of Dennard scaling, data movement energy has become one of the biggest contributors to on-chip power consumption.

This reality conflicts with the large-volume demands of AI applications, which require huge amounts of data to be moved. Once again, in-memory computing seems to offer a solution to this problem.
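Both effects in this section can be sketched numerically. The first function below uses the standard CMOS switching-power estimate, P = a * C * V^2 * f, to show how power scales with clock frequency. The second computes what fraction of total energy goes to moving data instead of computing on it. All parameter values, including the per-operation and per-byte energies, are hypothetical placeholders and not measurements of any real chip.

```python
def dynamic_power_watts(activity, capacitance_f, voltage_v, frequency_hz):
    """Classic CMOS switching-power estimate: P = a * C * V^2 * f."""
    return activity * capacitance_f * voltage_v ** 2 * frequency_hz


def data_movement_share(num_ops, bytes_per_op, pj_per_op, pj_per_byte):
    """Fraction of total energy spent moving data rather than computing."""
    compute_pj = num_ops * pj_per_op
    movement_pj = num_ops * bytes_per_op * pj_per_byte
    return movement_pj / (compute_pj + movement_pj)


# Hypothetical circuit: doubling the clock doubles the dynamic power.
slow = dynamic_power_watts(0.1, 1e-9, 0.8, 1e9)
fast = dynamic_power_watts(0.1, 1e-9, 0.8, 2e9)
print(f"1 GHz: {slow:.3f} W, 2 GHz: {fast:.3f} W")

# Hypothetical energies: fetching a byte from distant memory is assumed to
# cost 100x one operation, while fetching from adjacent memory costs the same.
far = data_movement_share(1_000_000, 1.0, pj_per_op=1.0, pj_per_byte=100.0)
near = data_movement_share(1_000_000, 1.0, pj_per_op=1.0, pj_per_byte=1.0)
print(f"data-movement share, far memory: {far:.2f}, near memory: {near:.2f}")
```

Under these assumptions, almost all of the energy in the far-memory case goes to data movement, so shortening the distance data travels saves more than slowing the clock would.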

Untether AI’s Memory Solution

Some companies and research groups, like Imec and GlobalFoundries, have sidestepped the von Neumann bottleneck by constructing AI chips with in-memory neural-network processing.

Others, like Untether AI, hope to address AI/ML conflicts of speed, storage, and power consumption by leveraging in-memory computation, as seen in the new tsunAImi accelerator.

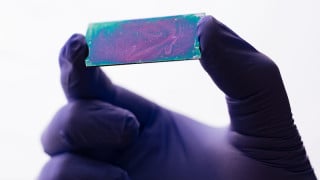

The new tsunAImi accelerator. Image used courtesy of Untether AI

This new accelerator offers some impressive specs: up to two peta-operations per second (POPs) in a standard PCI-Express card form factor and a power efficiency of 8 tera-operations per second per watt (TOPs/W). These results, Untether AI claims, are three to four times faster than the nearest competitor, depending on the application.

At the heart of its in-memory computing architecture is a memory bank consisting of 385 KB of SRAM with a 2D array of 512 processing elements. With 511 banks per chip, each device offers 200 MB of memory and operates at up to 502 TOPs in its “sport” mode. For maximum power efficiency, the device offers an “eco” mode that delivers 8 TOPs/W.
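As a quick sanity check, the per-bank figures quoted above can be multiplied out to see how they relate to the chip-level and card-level numbers. This sketch uses only values stated in the article. It assumes decimal units (1 MB = 1,000 KB), and the "implied devices per card" value is an inference from the quoted figures, not a specification taken from the article.

```python
# Figures quoted in the article.
BANKS_PER_CHIP = 511
SRAM_PER_BANK_KB = 385
PES_PER_BANK = 512
CHIP_TOPS_SPORT = 502   # per device, "sport" mode
CARD_POPS = 2           # per PCI-Express card

# Assumption: decimal units, 1 MB = 1,000 KB.
total_sram_mb = BANKS_PER_CHIP * SRAM_PER_BANK_KB / 1000
total_pes = BANKS_PER_CHIP * PES_PER_BANK

# Inference only: how many 502-TOPs devices would add up to 2 POPs.
implied_devices_per_card = CARD_POPS * 1000 / CHIP_TOPS_SPORT

print(f"SRAM per device: {total_sram_mb:.1f} MB")
print(f"Processing elements per device: {total_pes:,}")
print(f"Implied devices per card: {implied_devices_per_card:.2f}")
```

The per-bank SRAM multiplies out to just under the quoted 200 MB, and the card-level throughput is consistent with roughly four devices per card.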

The Future of In-Memory Computing

With AI here to stay, a central issue for many engineers will be how to overcome the unique and conflicting memory requirements. With in-memory computing being a potential solution, companies like Untether AI and Imec seem to be thinking in the right direction.

Do you have experience with memory architectures for AI/ML applications? What design challenges do you face? Share your thoughts in the comments below.
