Memristor Prototype May Give AI Chips a Sense of Time

“Do you have the time?” With the University of Michigan’s latest memristor discovery, AI chips may soon note the sequence of events.

A University of Michigan research group has created a time-aware neural network leveraging new memristor technologies. While this technology is currently only realized on a small scale, its properties could lead to a major paradigm shift in AI.

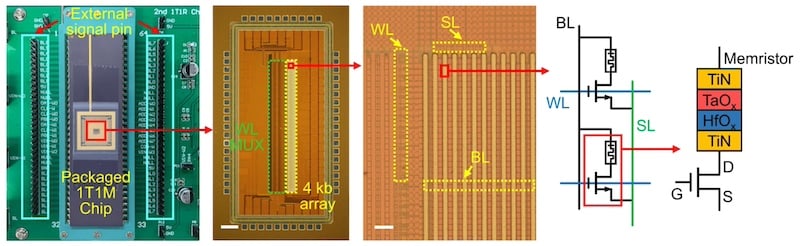

Packaged 1-transistor 1-memristor (1T1M) chip, an optical microscopic image of the memristor array, and the cell structure of the memristor. Image used courtesy of Nature

Compared to early neural networks such as perceptrons, modern AI models go far beyond simple pattern recognition. The latest deployments, such as Copilot or GPT-4, generate new material. This performance, however, consumes a considerable amount of power.

Finding Inspiration in Neural Relaxation Time

The researchers looked to neurons in the human brain to learn how they could replicate timekeeping in memristors, the hardware analog of neurons. Neurons encode information about when a sequence of events occurs through something called "relaxation time." Neurons receive electrical signals and send some on. The neuron will only send its own signals when it receives a certain threshold of incoming signals, and this threshold must be met in a certain timeframe. If too much time elapses, the neuron relaxes and releases electrical energy. Humans can understand when events happen and in what order because these neurons relax at different rates in our neural networks.
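The threshold-within-a-timeframe behavior described above can be sketched as a toy "leaky integrate-and-fire" neuron. This is only an illustration, not the researchers' model: the exponential decay, the function name, and the parameters `tau`, `threshold`, and `weight` are all assumptions made for the example.

```python
import math

def leaky_integrate_and_fire(spike_times, tau, threshold, weight=1.0):
    """Return the times at which a toy leaky neuron fires.

    spike_times: sorted arrival times of incoming signals (arbitrary units)
    tau:         assumed relaxation time constant (same units)
    threshold:   accumulated signal level needed to fire
    weight:      contribution of each incoming signal (hypothetical)
    """
    potential = 0.0
    last_time = None
    fired = []
    for t in spike_times:
        if last_time is not None:
            # Between inputs the neuron "relaxes": stored signal decays.
            potential *= math.exp(-(t - last_time) / tau)
        potential += weight
        last_time = t
        if potential >= threshold:
            fired.append(t)
            potential = 0.0  # reset after firing
    return fired

# Three inputs arriving close together push the neuron over threshold...
close_together = leaky_integrate_and_fire([0, 1, 2], tau=10, threshold=2.5)
# ...but the same three inputs spread far apart do not.
spread_out = leaky_integrate_and_fire([0, 50, 100], tau=10, threshold=2.5)
```

In this sketch, a neuron with a larger `tau` would tolerate longer gaps between inputs, which is one way to picture how different relaxation rates can encode timing.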

Memristors operate a little differently. When a memristor is exposed to a signal, its resistance decreases and allows more of the next signal to pass. Relaxation, for a memristor, means that its resistance rises again over time. The UM team's research demonstrates that variations on a base material can yield different relaxation times, similar to natural variations in neurons' relaxation time, giving the memristor a timekeeping mechanism.

Researchers Tap the 'Kitchen Sink of the Atomic World'

Using an entropy-stabilized oxide (ESO), the UM memristors exhibited relaxation time constants that can be tuned from 159 to 278 ns. As a result, time-dependent neuron activation can be programmed in hardware, removing the need for power-hungry GPUs when deploying a model.

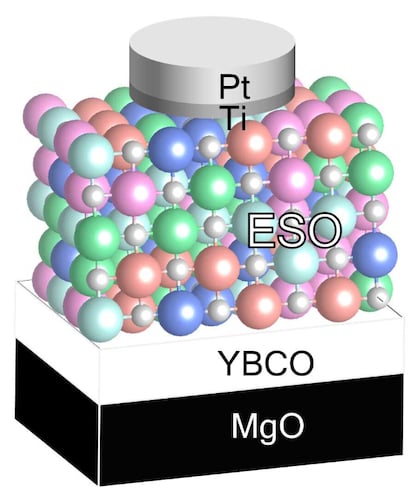

The ESO's relaxation time can be tuned by controlling the ratios of its constituent oxides, enabling programmable time dependence in electrical neurons. Image used courtesy of the University of Michigan
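To get a feel for what the two ends of the reported 159 to 278 ns range mean, here is a minimal sketch that assumes the device response decays as a simple exponential. The article does not state the functional form, so the exponential model and the function names are assumptions for illustration only.

```python
import math

# Ends of the tunable range reported in the article.
TAU_FAST_NS = 159.0
TAU_SLOW_NS = 278.0

def remaining_response(elapsed_ns, tau_ns):
    """Fraction of the initial response left after elapsed_ns.

    Assumes simple exponential relaxation, exp(-t / tau).
    """
    return math.exp(-elapsed_ns / tau_ns)

def elapsed_from_response(response, tau_ns):
    """Invert the assumed decay to estimate how long ago the signal arrived."""
    return -tau_ns * math.log(response)

# 200 ns after a signal, the slower device retains more of its response.
fast_left = remaining_response(200.0, TAU_FAST_NS)
slow_left = remaining_response(200.0, TAU_SLOW_NS)
```

Under this assumption, reading how far a device has relaxed is equivalent to reading how much time has passed, and devices with different time constants cover different time windows.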

The UM group developed this ESO using a yttrium, barium, copper, and oxygen (YBCO) substrate, which exhibits superconducting properties below -292°F. One researcher on the project termed this type of entropy-stabilized oxide the "kitchen sink of the atomic world"; that is, the more elements the researchers added, the more stable it became.

After training, the device recognized the sounds of the numbers zero to nine—in many cases before the audio input was even complete—all the while maintaining a better operating efficiency compared to GPU-based systems. In the future, the team believes they can further improve the energy-intensive process used to make the device.

First Memristor With Timekeeping Behavior

In modern neural networks, GPU technology accomplishes much of the training and recognition. The GPU pulls known weights from memory, uses them for multiplication and accumulation, and sends the results back to memory. This can be repeated any number of times, with the result being the model output. This approach works perfectly well for small models. As models become more advanced, the number of memory moves begins to highlight the weaknesses of the von Neumann architecture. Many researchers and developers are turning to compute-in-memory or hardware-enabled techniques to cut down on this data transfer and reduce energy consumption.
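The fetch, multiply, accumulate loop described above can be sketched in a few lines. This is a simplified software illustration, not a model of any real GPU: the function name and the fetch counter are hypothetical, and the counter exists only to show how weight reads scale with model size.

```python
def mac_with_weight_fetches(weights, inputs):
    """Matrix-vector product that also counts weight reads from memory.

    weights: list of rows, one row per output neuron
    inputs:  list of input activations
    Returns (outputs, fetches).
    """
    fetches = 0
    outputs = []
    for row in weights:
        acc = 0.0
        for w, x in zip(row, inputs):
            fetches += 1   # each weight is pulled from memory before use
            acc += w * x   # multiply and accumulate
        outputs.append(acc)
    return outputs, fetches

# A 2x2 layer needs 4 weight fetches; the count grows with rows * columns.
outputs, fetches = mac_with_weight_fetches([[1, 2], [3, 4]], [10, 1])
```

In a compute-in-memory design, the weights stay where they are stored and the multiplication happens in place, so the per-weight fetch counted here is the step such hardware aims to avoid.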

The UM group is not the first to use memristors in AI and advanced computing. Many previous groups have explored new materials for compute-in-memory. The UM group is, however, the first group to show time-dependent behavior—something crucial to replicating how the human brain operates.

Enabling More Energy-Efficient AI Chips

While the UM group has no misconceptions about their tunable ESOs being commercially available soon, their research marks another step toward hardware-enabled AI performance.

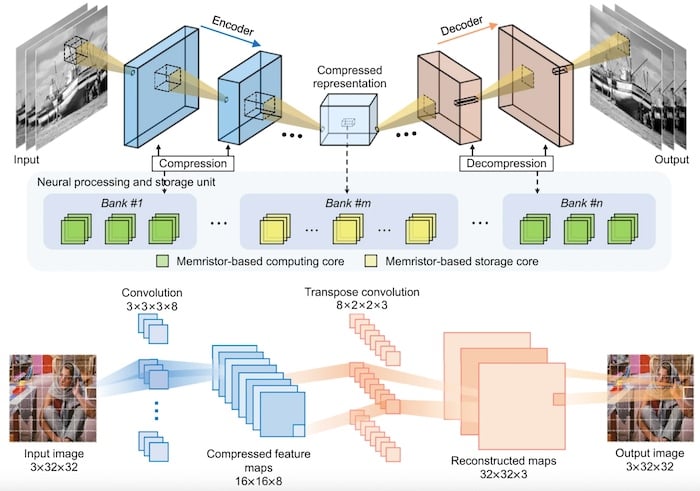

Schematic illustration of UM's memory-based cores in an in-memory processing system. Image used courtesy of Nature

If the memristive devices can leverage modern semiconductor techniques, their impact on bespoke AI hardware solutions could be significant. The UM team estimates that their new material system could improve the energy efficiency of AI chips by about six times compared with current materials that lack tunable time constants.
