MIT Deep Learning Algorithm Passes an Important First Test

MIT has developed a deep learning algorithm that can "create" sounds so realistic, a human can't tell they're engineered.

MIT has developed a deep learning algorithm that has successfully passed the Turing Test for sound.

The Turing Test

The Turing Test was designed by Alan Turing as a way of measuring how good a machine is at imitating human behavior. Passing the test means that the difference between a machine and a human is imperceptible.

A depiction of the classic Turing Test wherein a tester (C) is asked to determine whether A or B is human or machine. Image courtesy of Wikimedia.

In popular culture, the Turing Test is often portrayed as a computer attempting to seem as human as possible, to the point where humans mistake it for one of their own.

However, this is only one application of the Turing Test, which comes in several variants. For instance, some machines can mimic human sounds, while others can compete visually with what a human can do, which is a large step toward autonomous vehicles.

MIT's Deep Learning Algorithm

MIT developed a deep learning algorithm capable of assessing physical interactions in videos and the sounds resulting from those interactions. The algorithm was trained over several months using over 1,000 videos with 46,000 sounds of objects being hit, scratched, or poked with a drumstick. The drumstick was used because it provided a consistent sound when hitting each object.

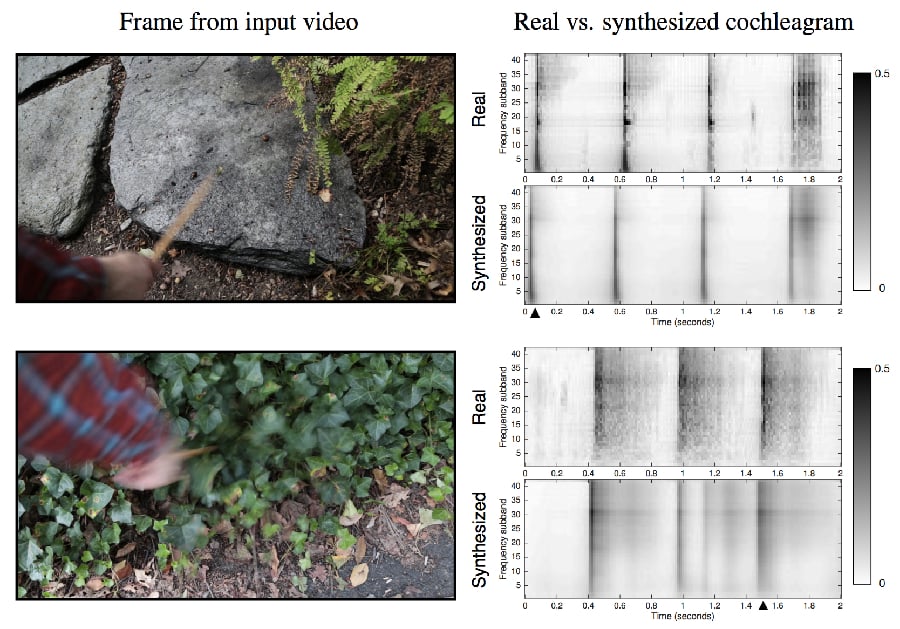

The algorithm was then fed muted video clips of a drumstick hitting various objects and instructed to produce sound effects appropriate for each video.

To accomplish this, the algorithm uses parametric inversion to create the sounds rather than simply searching a library for a clip that matches the video it sees. Once the algorithm has created a reasonable sound profile (a cochleagram, which is a time-frequency representation of the sound), it finds the closest match in the database and provides that as its output.
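The final matching step can be pictured as a nearest-neighbor lookup. The sketch below is only an illustration: the labels, the three-value feature vectors, and the helper names `euclidean` and `nearest_sound` are all made up for this example, and the article does not say which distance measure MIT's system uses.

```python
import math

def euclidean(a, b):
    """Straight-line (L2) distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def nearest_sound(predicted, database):
    """Return the label of the stored sound whose features lie closest to `predicted`."""
    return min(database, key=lambda label: euclidean(predicted, database[label]))

# Hypothetical database: labels and feature values are invented for illustration.
database = {
    "wood": [0.9, 0.4, 0.1],
    "metal": [0.2, 0.7, 0.9],
    "cushion": [0.3, 0.1, 0.0],
}

# Hypothetical features predicted from a muted video clip.
predicted = [0.25, 0.65, 0.8]

best = nearest_sound(predicted, database)
print(best)
```

A real system would compare much longer feature vectors, but the idea is the same: predict features first, then return the stored sound that best fits them.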

Image courtesy of MIT CSAIL.

The dataset that was used, called The Greatest Hits, is now publicly available to others who may want to use it in their own experiments. The sounds are preprocessed by applying bandpass filters and then computing the envelope of each filtered waveform, which gives a simple representation of the sound. A representation is computed for each video frame, and each frame is subsequently mapped to sound features.
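To make the preprocessing idea concrete, here is a minimal sketch of "bandpass, then envelope" in plain Python. It is not MIT's pipeline: it assumes a naive DFT mask in place of a real filter bank, the band edges and test signal are invented, and the names `band_envelope` and `toy_cochleagram` are hypothetical.

```python
import cmath
import math

def dft(x):
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]

def idft(spectrum):
    """Naive O(n^2) inverse discrete Fourier transform."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def band_envelope(signal, sample_rate, low_hz, high_hz):
    """Envelope of the part of `signal` that lies between low_hz and high_hz.

    Keeps only positive-frequency bins inside the band and doubles them,
    which yields the analytic signal of the band; its magnitude is the envelope.
    """
    n = len(signal)
    spectrum = dft(signal)
    analytic = [0j] * n
    for k in range(1, n // 2):
        freq = k * sample_rate / n
        if low_hz <= freq <= high_hz:
            analytic[k] = 2 * spectrum[k]
    return [abs(v) for v in idft(analytic)]

def toy_cochleagram(signal, sample_rate, bands):
    """One envelope per band: rows are frequency bands, columns are time samples."""
    return [band_envelope(signal, sample_rate, lo, hi) for lo, hi in bands]

# Invented test signal: a strong 8 Hz tone plus a weaker 40 Hz tone.
sample_rate = 128
n = 128
signal = [math.sin(2 * math.pi * 8 * t / sample_rate)
          + 0.5 * math.sin(2 * math.pi * 40 * t / sample_rate)
          for t in range(n)]

bands = [(4, 16), (32, 48), (50, 60)]  # hypothetical band edges in Hz
coch = toy_cochleagram(signal, sample_rate, bands)
for (lo, hi), env in zip(bands, coch):
    print(f"{lo}-{hi} Hz: mean envelope {sum(env) / len(env):.3f}")
```

Each row of the result tracks how much energy one frequency band carries over time, which is the kind of compact sound representation the paragraph above describes.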

The end result is that the sounds produced by the algorithm are almost identical to those produced by the natural interactions, and humans can’t tell the difference.

Testing

The MIT team used an online study to test how well the deep learning algorithm actually performs.

Testers were shown two videos of physical interactions: one with the actual sound and one with algorithm-produced sound. They were then asked to choose which sound was the "real", or authentic, soundtrack for each video.

The study found that subjects picked the algorithm's fake sound over the real one twice as often as they did for a baseline algorithm. When MIT compared its algorithm to other similar algorithms, it found that its approach outperformed both the best image-matching method and the baseline sound.

The algorithm was also tested using an image-based nearest-neighbor approach, specifically on the frame where the impact occurs. The audio was tested using the centered model for the loudness and the spectral centroids. The algorithm was also tested to see if it could detect impact events on its own rather than having the impact centered.
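For readers unfamiliar with the two audio measures mentioned here, the sketch below shows one common way to compute them. It assumes RMS amplitude as a crude stand-in for loudness and a magnitude-weighted mean frequency for the spectral centroid; the article does not give MIT's exact definitions, and the function names and test tones are invented.

```python
import cmath
import math

def magnitude_spectrum(signal):
    """Magnitudes of the non-negative-frequency DFT bins (naive O(n^2) DFT)."""
    n = len(signal)
    return [abs(sum(signal[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
            for k in range(n // 2 + 1)]

def spectral_centroid(signal, sample_rate):
    """Magnitude-weighted mean frequency in Hz; higher values sound 'brighter'."""
    mags = magnitude_spectrum(signal)
    total = sum(mags)
    if total == 0:
        return 0.0
    n = len(signal)
    return sum(k * sample_rate / n * m for k, m in enumerate(mags)) / total

def rms_loudness(signal):
    """Root-mean-square amplitude, used here as a simple proxy for loudness."""
    return math.sqrt(sum(s * s for s in signal) / len(signal))

# Invented test tones: a dull 4 Hz tone and a brighter 20 Hz tone.
sample_rate = 64
n = 64
dull = [math.sin(2 * math.pi * 4 * t / sample_rate) for t in range(n)]
bright = [math.sin(2 * math.pi * 20 * t / sample_rate) for t in range(n)]

print(f"dull centroid:   {spectral_centroid(dull, sample_rate):.2f} Hz")
print(f"bright centroid: {spectral_centroid(bright, sample_rate):.2f} Hz")
print(f"dull loudness:   {rms_loudness(dull):.4f}")
```

Comparing these two numbers between a generated sound and the recorded one gives a rough check on whether the prediction is about as loud and as bright as the real impact.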

Next Steps

The success of MIT's algorithm has far-reaching effects. One of the most significant takeaways is that the algorithm can accurately determine the properties of a material just by being fed video of it. This is a stride towards autonomous vehicles that can assess the materials around them. Being able to predict the sound can lead to being able to predict the response to interacting with something in the environment.

But the visual Turing Test is just one component of fully passing the Turing Test. There are many more complexities involved in machines trying to replicate human perceptions and actions.

MIT is currently developing an algorithm for passing the visual Turing Test, which is good news for the machine learning community and a step towards having machines understand the consequences of interacting with different environments.

There are still other aspects of the Turing Test, such as having a machine try to replicate how humans would interact with each other, that are yet to be surmounted.

For now, however, the results MIT has achieved have many useful applications across multiple fields.

Watch the video explanation of MIT's processes here:


You say “The results of the study were that people chose the fake sound twice as often as the real sound”. If you’ll excuse the pun, this definitely doesn’t sound right. Sure enough, the source article actually says “Subjects picked the fake sound over the real one twice as often as a baseline algorithm.” Big difference! The new algorithm was twice as effective as the baseline algorithm, not twice as effective as the real thing!

People selected the sounds produced by the computer more often than the real ones. Does that mean the computer makes the same mistakes as humans do? That the computer thinks like a human?