Researchers Get Ahead of Hardware Backdoors—By Creating Their Own

This article assesses how researchers have taken a preventative approach to security by creating their own hardware backdoors.

In a recent press conference, U.S. Attorney General William Barr demanded that “Apple and other technology companies help us find a solution so that we can better protect the lives of Americans and prevent future attacks.”

Barr issued this statement in response to a shooting at Naval Air Station Pensacola in December 2019 that left three dead. The FBI then demanded that Apple decrypt the shooter’s iPhone.

The shooter's severely damaged iPhone recovered by the FBI. Image used courtesy of the US Department of Justice

Although Apple cooperated with the investigation, the tech giant was reluctant to build a backdoor into future devices to simplify law enforcement access: “We have always maintained there is no such thing as a backdoor just for the good guys.”

Although the debate on backdoors, national security, and user privacy is especially hot in the wake of the Pensacola shooting, it’s far from being a new one.

To paint a clearer picture of this conversation, it may be informative to review a few snapshots of moments when the ethics and feasibility of hardware backdoors were called into question. We'll do this by looking back on research in which engineers took a preventative approach to hardware backdoors by creating their own.

Proof-of-Concept Backdoor

At the 2012 Black Hat security conference in Las Vegas, researcher Jonathan Brossard presented a proof-of-concept backdoor that he claimed was “permanent” and “capable of infecting more than a hundred different motherboards.”

In addition, Brossard claimed that the malware—named Rakshasa after a humanoid demon in Hindu and Buddhist mythology—is essentially undetectable since it is built on free software like Coreboot, Seabios, and iPXE.

Brossard asserted that the goal of his proof-of-concept was to “raise awareness of . . . the dangers associated with the PCI standard” and “question the use of non-open-source firmware shipped with any computer.”

A diagram of IBM PC architecture, which Brossard claims is vulnerable to backdoors being embedded in the firmware. Image used courtesy of Jonathan Brossard

Rakshasa highlighted a serious danger of hardware backdoors: they can’t be removed by standard methods such as antivirus software.

Hardware Trojans in Intel Designs

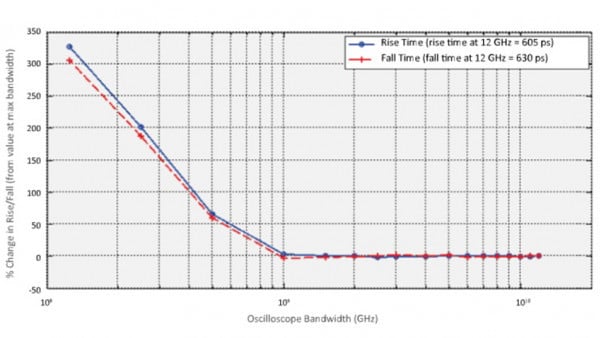

In 2013, researchers at the University of Massachusetts Amherst launched a project to develop hardware Trojans as a preventative approach to IC security: if they could think like an attacker, they could help manufacturers implement defensive measures against hardware Trojans.

The Trojans were implemented below the gate level by “changing the dopant polarity of existing transistors.”

Georg T. Becker et al. explained, “Since the modified circuit appears legitimate on all wiring layers (including all metal and polysilicon), our family of Trojans is resistant to most detection techniques, including fine-grain optical inspection and checking against 'golden chips.'”

The team then inserted the Trojans into two designs: a digital post-processing unit from an Intel random number generator and a side-channel-resistant SBox.

Schematic of the Trojan-free (left) and Trojan AOI222 X1 gate (right). Image used courtesy of Georg T. Becker et al.

Georg T. Becker et al. concluded that the sub-transistor-level Trojan could weaken the random number generator enough for an attacker to break any key it produced, all while avoiding detection by Intel’s functional testing procedure. In the SBox, it could also create a hidden side channel by changing the power profile of two gates.
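To see why a weakened random number generator makes keys recoverable, consider the toy Python sketch below. It is not the researchers' Trojan or Intel's design: the hash-based `derive_key`, the constant `FIXED`, and the choice of `N = 12` free bits are all assumptions made for illustration. The idea it shows is that if an attacker knows all but a handful of the seed bits, the remaining bits can simply be enumerated.

```python
import hashlib
import secrets

KEY_BITS = 128

def derive_key(seed: int) -> bytes:
    """Toy stand-in for RNG post-processing: hash a 128-bit seed into a key."""
    return hashlib.sha256(seed.to_bytes(KEY_BITS // 8, "big")).digest()[:16]

def trojaned_seed(free_bits: int, fixed_pattern: int) -> int:
    """Seed whose low `free_bits` bits are random; the rest are a known constant."""
    mask = (1 << free_bits) - 1
    return (fixed_pattern & ~mask) | secrets.randbits(free_bits)

def brute_force(target_key: bytes, free_bits: int, fixed_pattern: int):
    """Try every value of the free bits; return the seed that yields target_key."""
    mask = (1 << free_bits) - 1
    base = fixed_pattern & ~mask
    for guess in range(1 << free_bits):
        if derive_key(base | guess) == target_key:
            return base | guess
    return None

# Hypothetical constant known only to the attacker (illustrative value).
FIXED = 0x0123456789ABCDEF0123456789ABCDEF
N = 12  # only 2**12 = 4096 candidate seeds remain instead of 2**128

seed = trojaned_seed(N, FIXED)
key = derive_key(seed)
recovered = brute_force(key, N, FIXED)
print("seed recovered:", recovered == seed)
```

To anyone who does not know the constant, the output still looks like an ordinary 128-bit key, which hints at why functional tests alone may not expose this kind of modification.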

The researchers pointed out that “an evaluator who is not aware of the Trojan cannot attack the Trojan.” They therefore encouraged future researchers to build detection and security measures in at the silicon level.

Undetected Attack on Analog Circuits

Three years after the UMass Amherst research, a group of students and professors from the University of Michigan’s Electrical Engineering and Computer Science department won an award at the 2016 IEEE Symposium on Security and Privacy for a paper titled “A2: Analog Malicious Hardware.”

Like Rakshasa and the UMass Amherst hardware Trojan, A2 opened the gates for reverse engineering efforts against invisible backdoors.

Places in the typical IC design process that are susceptible to attacks. Image used courtesy of Kaiyuan Yang et al.

This time, though, the researchers added a tiny analog circuit to a chip. Its capacitor siphons a little charge from a nearby wire each time that wire transitions between digital values. Once the capacitor is fully charged, which an attacker can bring about remotely by making the wire toggle often enough, the circuit flips a target flip-flop, giving the attacker access to the device housing the modified chip.

The researchers expounded, “Once the trigger circuit is activated, payload circuits activate hidden state machines or overwrite digital values directly to cause failure or assist system-level attacks.”
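The charge-accumulation trigger can be sketched with a simple discrete-time model in Python. This is a rough illustration only: the `gain`, `leak`, and `threshold` values are hypothetical and are not taken from the A2 paper. The sketch shows the general idea that occasional toggling leaks away before it adds up, while a sustained burst of toggling pushes the capacitor past the trigger threshold.

```python
def run_trigger(toggles, gain=0.1, leak=0.9, threshold=0.6):
    """Return the first cycle at which the trigger fires, or None.

    toggles: iterable of booleans, True when the victim wire toggles
    in that clock cycle. All parameter values are illustrative.
    """
    charge = 0.0
    for cycle, toggled in enumerate(toggles):
        charge *= leak          # stored charge leaks away every cycle
        if toggled:
            charge += gain      # each toggle siphons a little charge
        if charge >= threshold:
            return cycle        # trigger fires; a payload would act here
    return None

# Normal workload: the wire toggles only once every ten cycles.
normal = [i % 10 == 0 for i in range(1000)]
# Attack workload: the attacker forces the wire to toggle every cycle.
attack = [True] * 50

print("normal workload fires:", run_trigger(normal) is not None)
print("attack workload fires:", run_trigger(attack) is not None)
```

In this model the trigger stays silent under the sparse workload and fires only during the burst, which is consistent with the article's point that such a backdoor can sit unnoticed until the attacker deliberately activates it.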

The experiment, like the other hardware backdoors before it, successfully evaded detection.

Is Security the Real Price of Outsourcing?

The race to build smaller, more powerful transistors comes with a hefty price tag.

Because of the high cost of production, many semiconductor companies outsource chip fabrication to third-party companies. Unfortunately, this can leave those chips vulnerable to malicious hardware modifications.

As an example, Dr. Christopher Ashley Ford from the US State Department recently commented on the security implications of working with Chinese tech giants.

Although Chinese manufacturers have claimed their government cannot compel them to install backdoors in hardware architectures, Ford explains, “If the Party comes asking” for access to a Chinese company’s “technology...information…[and] networks… the only answer the company can give is ‘Yes.’”

As engineers like Jonathan Brossard and the university research teams continue to play the role of a hardware attacker, manufacturers can better anticipate weak spots in their designs and prioritize security, which, as we’ve discussed in the past, is not just a software problem.

What's your take on hardware backdoors? Is it a designer's responsibility to implement safeguards against such modifications? Or is it an inevitable risk that comes with outsourcing to third parties? Share your thoughts in the comments below.
