Team-ups and Sensors—ADAS Ramps Up Sensing Solutions

One new type of sensor and two novel automotive processing approaches might be the key to limiting traffic accidents.

ADAS (advanced driver assistance systems) are digital electronic systems developed to help drivers navigate the roads more safely and with fewer accidents, crashes, and losses of life. These systems employ advanced sensors and processors that can give drivers assistive information or directly take an automatic action overriding the driver and preventing an accident.

Studies from multiple institutions, including the Insurance Institute for Highway Safety, show that ADAS reduces traffic accidents in real-world driving, and many engineers are working to improve current ADAS solutions to lower these numbers even further.

A graph showing vehicles equipped with different sensor systems for vehicle safety as well as future predictions. Image used courtesy of HLDI and IIHS

With the hopeful results of ADAS implementation in mind, this article focuses on three new developments in the automotive industry, from a new type of sensor to two collaborative projects in in-vehicle sensor processing for ADAS applications.

Allegro Creates a More Precise ADAS Motion Sensor

During this year's Sensors Converge Conference in San Jose, California, Allegro Microsystems unveiled two variants of a new type of position sensor named A33110 and A33115 developed for use in ADAS applications.

What makes these two chips unique is the combination of the company's vertical Hall-effect technology (VHT) and its tunneling magnetoresistance (TMR) technology in a single sensor package. According to Allegro, this approach allows for heterogeneous, redundant sensing with higher resolution and accuracy than contemporary systems that use conventional Hall-effect and giant magnetoresistance sensors.

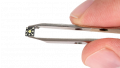

An example of a vertical Hall element. Image used courtesy of Allegro Microsystems

Both ICs are 360-degree sensors featuring a primary (TMR) and secondary (VHT) transducer, each with independent digital signal processing and separate regulators and temperature sensors. In addition, the two system-on-chip (SoC) devices include onboard EEPROM capable of many read/write cycles for programming calibration parameters.

The A33115 differs from the A33110 in that it adds a turn counter for tracking motion in 90-degree increments, a low-power mode, and a user-programmable duty cycle for further reducing power consumption during a key-off system condition. Both devices operate from a supply of 3.7 V to 18 V and can be connected directly to a vehicle's battery.
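The value of two independent channels is easiest to see in a plausibility check. The following Python sketch is not Allegro's firmware or API: the function names, the 2-degree tolerance, and the reading of the turn counter as a signed count of quarter turns are all assumptions made for illustration. It compares a primary and a secondary angle reading with wraparound at 360 degrees, and converts a count of 90-degree increments into total rotation.

```python
def angle_difference_deg(a: float, b: float) -> float:
    """Smallest signed difference a - b in degrees, in the range [-180, 180)."""
    return (a - b + 180.0) % 360.0 - 180.0


def channels_agree(primary_deg: float, secondary_deg: float,
                   tolerance_deg: float = 2.0) -> bool:
    """Return True when two redundant angle readings match within a tolerance.

    The 2-degree default is a hypothetical threshold, not a datasheet value.
    """
    return abs(angle_difference_deg(primary_deg, secondary_deg)) <= tolerance_deg


def quarter_turns_to_degrees(quarter_turns: int) -> int:
    """Convert a count of 90-degree increments into total rotation in degrees.

    Assumes the counter is a signed integer; the real register format may differ.
    """
    return quarter_turns * 90


if __name__ == "__main__":
    # Readings on either side of the 0/360 boundary are only 2 degrees apart.
    print(angle_difference_deg(359.0, 1.0))
    print(channels_agree(359.0, 1.0))
    print(channels_agree(10.0, 20.0))
    print(quarter_turns_to_degrees(5))
```

In a design like this, a host controller could treat a disagreement between the two channels as a fault and fall back to a safe state, which is the practical benefit of redundant transducers.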

Allegro believes that its TMR and VHT technology could become the winning combination for the development of future automated and autonomous vehicles as suppliers and manufacturers are looking to improve their systems with smaller, more advanced, and more precise sensors.

Standardizing ADAS Sensor and Processor Communication

Earlier this year, OmniVision Technologies, the California-based digital imaging semiconductor company, and Valens Semiconductor, the Israel-based automotive and audio-video semiconductor company, announced a new partnership to develop MIPI A-PHY compliant solutions for ADAS applications.

Created by the MIPI Alliance (Mobile Industry Processor Interface Alliance), A-PHY is a high-speed communications standard for automotive interfaces such as those used in ADAS. It was released in September 2020 and adopted as an IEEE standard in June 2021. Automotive suppliers such as OmniVision and Valens have adopted the A-PHY standard in developing next-generation in-vehicle sensor system solutions.

The first company to implement this standard was Valens itself, with its VA7000 chipset family, which is designed to extend CSI-2 (Camera Serial Interface 2) connections from sensors such as cameras, radars, and LiDARs. Combined with OmniVision's imaging sensors, these chips are intended to serve as the basis for the partnership between the two companies.

A block diagram for the VA7044/42 chipset. Screenshot used courtesy of Valens

According to the companies, this partnership will also include the 8.3-megapixel OX08B40 image sensor from OmniVision, featuring a high dynamic range of 140 dB with improved LED flicker mitigation performance.

In addition, the OX08B40 itself is a 4K resolution CMOS module with a 16:9 aspect ratio capable of capturing 36 frames per second. Created for ADAS and autonomous vehicle applications, this sensor is available in different color filter patterns to match the needs of the industry's machine vision applications.
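A quick calculation shows why a high-speed link matters for a sensor like this. The sketch below assumes that "4K" means 3840 x 2160 pixels (which matches the quoted 8.3 megapixels) and assumes 12 bits per pixel of raw output. The bit depth is an illustrative assumption rather than a figure from the announcement, and blanking intervals and protocol overhead are ignored.

```python
# Assumed frame geometry for a 16:9 "4K" sensor.
WIDTH_PX = 3840
HEIGHT_PX = 2160
FRAMES_PER_SECOND = 36   # frame rate quoted for the OX08B40
BITS_PER_PIXEL = 12      # hypothetical raw bit depth, for illustration only


def pixels_per_frame(width: int, height: int) -> int:
    """Total pixel count of one frame."""
    return width * height


def raw_bit_rate(width: int, height: int, fps: int, bits_per_pixel: int) -> int:
    """Uncompressed pixel data rate in bits per second, ignoring overhead."""
    return pixels_per_frame(width, height) * fps * bits_per_pixel


if __name__ == "__main__":
    pixels = pixels_per_frame(WIDTH_PX, HEIGHT_PX)
    rate = raw_bit_rate(WIDTH_PX, HEIGHT_PX, FRAMES_PER_SECOND, BITS_PER_PIXEL)
    print(f"{pixels / 1e6:.1f} megapixels per frame")
    print(f"{rate / 1e9:.2f} Gbit/s of raw pixel data")
```

Under these assumptions, a single camera produces roughly 3.6 Gbit/s of raw pixel data, so a vehicle with several such cameras needs multi-gigabit links between its sensors and processors.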

Both companies hope that this collaboration will enable their customers to evaluate the A-PHY standard and bring high-speed sensor-to-vehicle communication to future automotive ADAS and safety systems.

Going from ADAS to Automated Driving

In late June of this year, another collaborative project in ADAS was announced by two more companies, Israeli AI processor manufacturer Hailo and Japanese semiconductor company Renesas.

With Hailo's expertise in AI and Renesas' decades of experience in automotive semiconductor development, this new partnership hopes to deliver improved and cost-effective ADAS processing solutions. They aim to seamlessly scale ADAS into automated driving by combining the Hailo-8 processors with the Renesas R-Car V3H and V4H system-on-chips.

An example diagram showing the blend of a Renesas and Hailo solution. Image used courtesy of Hailo and Renesas

The Hailo-8 processor is an AI chip designed for edge devices (hardware that processes data locally, close to where it is generated, rather than sending it to a remote server). According to the company, its processor is more efficient than other edge processor solutions and outperforms them at a significantly lower cost and smaller physical size. Additionally, this IC is capable of up to 26 tera-operations per second (TOPS) with a typical power consumption of 2.5 watts.
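Efficiency claims for AI accelerators are often summarized as TOPS per watt. The short sketch below derives that figure from the two numbers quoted above; the function name is hypothetical. Note that it pairs a peak throughput with a typical power figure, so the result is an indicative ratio rather than a measured benchmark.

```python
def tops_per_watt(peak_tops: float, typical_power_w: float) -> float:
    """Ratio of peak throughput (TOPS) to typical power draw (watts)."""
    if typical_power_w <= 0:
        raise ValueError("power must be positive")
    return peak_tops / typical_power_w


if __name__ == "__main__":
    # Figures quoted for the Hailo-8: up to 26 TOPS at a typical 2.5 W.
    print(f"{tops_per_watt(26.0, 2.5):.1f} TOPS/W")
```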

On the Renesas side, the R-Car automotive SoC platform was developed for intelligent and automated driving applications and covers a wide range of uses, from ADAS and autonomous driving to in-vehicle infotainment. The two chips used in the company's collaboration with Hailo, the R-Car V3H and R-Car V4H, are processing SoCs for computer vision and image recognition using different types of sensors such as cameras, radars, and LiDARs.

Through their partnership, the two companies claim to deliver the highest performance and efficiency in ADAS solutions. They say the combination enables cost-effective vehicle system designs with an open software ecosystem and scalable sensor integration and fusion, maximizing innovation opportunities for manufacturers and suppliers.

Implementing ADAS Solutions for the Future of Automobiles

Ultimately, the goal of ADAS is to improve the driving experience and increase vehicle safety. The growing popularity of these technologies and their entrance into legislation worldwide show the importance of innovation in the automotive sensor and processor field.

Advancements like Allegro's new type of sensor, Valens and OmniVision's A-PHY-based sensor connectivity, and Renesas and Hailo's SoC solution can be seen as valuable examples of improvements on already familiar technologies. These advancements could help open the door for engineers and car manufacturers to improve ADAS and build autonomous systems for fully fledged self-driving vehicles.
