Deep Learning the GPU Way

Here is a brief profile of deep learning technology and how GPUs are scoring early victories in this space.

Image courtesy of NVIDIA.

Deep learning, a subset of machine learning, is rapidly moving into mainstream designs at the intersection of GPU, FPGA, and DSP silicon building blocks. It's a technique that teaches a compute engine to carry out a task from examples instead of explicitly programming it to do so.

Deep learning, rooted in artificial intelligence, has broad application in the automotive, healthcare, imaging, and security industries, where it carries out tasks such as video analysis, facial recognition, and image classification. For instance, self-driving cars are a major beneficiary of deep learning-enabled chips that monitor data from cameras, LiDAR, and other sensors to recognize objects and then react to specific situations.

Deep learning has evolved from neural networks, an effort to mimic the human brain as an intelligent processing system. Early neural networks, however, usually consisted of a single layer: data is fed in, the network weighs it, and the result is output. Deep learning stacks more layers and is therefore capable of handling more complex scenarios such as object detection and recognition.
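The difference between a single layer and a stack of layers can be sketched in a few lines of plain Python. This is an illustrative toy, not how production frameworks are written: the function names `dense` and `forward`, the sigmoid activation, and all weight values are assumptions chosen for the example.

```python
import math


def dense(inputs, weights, biases):
    """One fully connected layer: a weighted sum per neuron, then a sigmoid."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(1.0 / (1.0 + math.exp(-total)))
    return outputs


def forward(inputs, layers):
    """Feed the data through each layer in turn; more layers means a deeper network."""
    activations = inputs
    for weights, biases in layers:
        activations = dense(activations, weights, biases)
    return activations


# Hypothetical weights, picked only for illustration (not trained values).
shallow = [([[0.5, -0.5]], [0.0])]  # 2 inputs -> 1 output
deep = [
    ([[0.5, -0.5], [-0.3, 0.8], [0.1, 0.1]], [0.0, 0.1, -0.1]),  # 2 -> 3
    ([[0.4, 0.2, -0.6], [0.7, -0.1, 0.3]], [0.0, 0.0]),          # 3 -> 2
    ([[1.0, -1.0]], [0.0]),                                      # 2 -> 1
]

sample = [1.0, 0.0]
shallow_out = forward(sample, shallow)
deep_out = forward(sample, deep)
```

Both networks use the same `dense` building block. The deep one simply applies it three times, so each layer works on the features produced by the layer before it.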

The model training and inference stages shown in the deep-learning workflow. Image courtesy of NVIDIA.

A deep learning workflow comprises two stages. The first, training, is a compute-intensive process that involves extensive analysis of data sets to build models that accurately describe the nature of objects. The second stage, inference, executes the trained models so that they can be applied in real time to identify real-world objects or situations and support appropriate decision making.
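The two stages can be illustrated with a deliberately tiny model. The sketch below uses a classic perceptron rather than a deep network, purely to keep the example short. The `train` and `infer` names, the learning rate, and the four labeled samples are assumptions made for illustration.

```python
def infer(weights, bias, features):
    """Inference stage: apply the frozen model to one input."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train(samples, labels, epochs=20, learning_rate=1.0):
    """Training stage: repeatedly adjust the weights using labeled examples."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(samples, labels):
            error = label - infer(weights, bias, features)
            # Perceptron rule: nudge the weights only when the guess was wrong.
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias


# Hypothetical labeled data: the label is 1 only when both features are present.
samples = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
labels = [0, 0, 0, 1]

# Stage 1: the expensive part, run once and typically offline.
weights, bias = train(samples, labels)

# Stage 2: the cheap part, run for every new input.
predictions = [infer(weights, bias, s) for s in samples]
```

Training loops over the whole data set many times, while inference is a single pass per input. That asymmetry is why the two stages can end up on different hardware.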

The GPU Way

NVIDIA, a first mover in the deep learning space, is now a market leader with GPUs that offer both the speed and the massive computing power needed to execute intensive algorithms. The company's CEO, Jen-Hsun Huang, cited deep learning adoption as the main factor in beating 2016's first-quarter targets.

Most neural network workloads, such as computer vision and other deep learning tasks, run on GPUs because graphics-based accelerators are inherently well suited to compute-heavy speech, image, and video processing applications.

Moreover, deep learning involves a lot of vector and matrix operations, so it runs faster on a GPU than on a CPU. A GPU is designed to execute the same instruction on many data elements in parallel. It also offers higher memory bandwidth and far more computational units; a GPU can have thousands of cores.
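Why matrix operations parallelize so well can be seen in a short sketch: every cell of a matrix product is an independent dot product, so each one could in principle be handed to a different core. The example below is plain Python with a thread pool standing in for GPU threads. It illustrates the independence of the work items, not GPU speed, and the `cell`, `matmul_sequential`, and `matmul_parallel` names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def cell(a, b, row, col):
    """The work of one hypothetical GPU thread: a single dot product."""
    return sum(a[row][k] * b[k][col] for k in range(len(b)))


def matmul_sequential(a, b):
    """Compute the cells one after another, as a single core would."""
    return [[cell(a, b, r, c) for c in range(len(b[0]))]
            for r in range(len(a))]


def matmul_parallel(a, b, workers=4):
    """Hand every cell to a worker; no cell depends on any other cell."""
    rows, cols = len(a), len(b[0])
    coords = [(r, c) for r in range(rows) for c in range(cols)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        flat = list(pool.map(lambda rc: cell(a, b, rc[0], rc[1]), coords))
    # Reassemble the flat list of results into rows.
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]


a = [[1, 2], [3, 4], [5, 6]]     # 3 x 2
b = [[7, 8, 9], [10, 11, 12]]    # 2 x 3

sequential_result = matmul_sequential(a, b)
parallel_result = matmul_parallel(a, b)
```

Both functions return the same 3 x 3 matrix. The parallel version only changes who computes each cell, which is the property a GPU exploits at the scale of thousands of cores.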

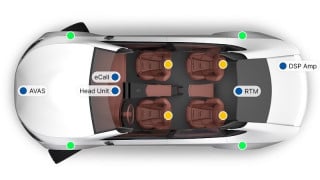

Deep learning is a key technology ingredient in self-driving cars. Image courtesy of NVIDIA.

However, while GPUs offer the massive horsepower required for the compute-intensive process of training models, executing those trained models for real-time use cases such as facial recognition often goes back to CPU clusters. There is also the issue of power wasted when a CPU has to control the GPU.

NVIDIA has built a commanding position in the nascent deep learning market by providing modern GPU hardware for the servers used to train models that differentiate between objects such as pedestrians, cars, and cyclists. Now the graphics chipmaker is catering to the second part, deep learning inference that executes the models, through the Tesla M40 and its smaller companion, the M4 GPU.

Meanwhile, other chip architectures, most notably DSPs and FPGAs, are catching up in the deep learning space, offering power efficiency and other advantages.

The second part of the series about deep learning will cover FPGAs' role in this burgeoning market.
