Human Brain Interface and Iris Tracking Technology at CES 2018

At CES 2018, eye-tracking and human brain interface technologies were displayed.

Eye-tracking and human brain interface technologies continue to expand human-computer interaction. Here's a look at three technologies that are changing how people interact with machines.

A Sensor for Internal Mindfulness and External Connectivity

The human brain has approximately 100 billion neurons. When roughly 1 million of them fire simultaneously, the activity can be detected at the scalp.

Brain Robotics demonstrated a human-brain interface device at CES 2018 that uses temporal and retroauricular (behind-the-ear) sensors to identify alpha, beta, and gamma brain waves, which are associated with relaxed, alert, and focused states of mind, respectively. That information is then passed through application programming interfaces (APIs) to give users feedback or to control peripheral devices.
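To make the idea of sorting a signal into brain-wave bands concrete, here is a minimal sketch in Python. It is not Brain Robotics' method. The band edges are commonly cited approximations that vary between sources, the function names `band_powers` and `dominant_state` are hypothetical, and the input is a synthetic signal rather than real sensor data.

```python
import cmath
import math

# Commonly cited EEG band edges in Hz; exact boundaries vary between sources.
BANDS = {"alpha": (8.0, 13.0), "beta": (13.0, 30.0), "gamma": (30.0, 45.0)}
# Labels follow the article's pairing of bands with states of mind.
STATES = {"alpha": "relaxed", "beta": "alert", "gamma": "focused"}


def band_powers(samples, sample_rate_hz):
    """Return the summed spectral power per band using a plain DFT."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove the DC offset
    powers = {name: 0.0 for name in BANDS}
    for k in range(1, n // 2):
        freq = k * sample_rate_hz / n
        band = next(
            (name for name, (lo, hi) in BANDS.items() if lo <= freq < hi), None
        )
        if band is None:
            continue  # frequency bin falls outside every band of interest
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        powers[band] += abs(coeff) ** 2
    return powers


def dominant_state(samples, sample_rate_hz):
    """Map the strongest band to the state of mind the article pairs it with."""
    powers = band_powers(samples, sample_rate_hz)
    return STATES[max(powers, key=powers.get)]


# Synthetic one-second signal: a strong 10 Hz component plus a weaker 20 Hz one.
RATE = 128
signal = [
    math.sin(2 * math.pi * 10 * t / RATE) + 0.3 * math.sin(2 * math.pi * 20 * t / RATE)
    for t in range(RATE)
]
print(dominant_state(signal, RATE))  # prints "relaxed"
```

A real device would also need filtering, artifact rejection, and per-user calibration, none of which is attempted here.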

At a CES press conference on Monday, a volunteer used the interface to control a robot that picked ping pong balls out of one box and moved them to another.

Image of a user of the Focus One brain-wave detector from Brain Robotics

Additionally, individuals can use the technology to track their focus levels while studying, and teachers can use it to track their students' attention levels while learning.

A Responsive Prosthetic

Most prosthetic devices cost tens of thousands of dollars. Brain Robotics has adapted its human brain interface technology to let single- and double-arm amputees control actuators, allowing them to interact with their world in a life-changing way.

While replacing the full dexterity of the human hand is likely still in the distant future, the company demonstrated the current state of its technology by having a double-arm amputee grip a brush and write in his native Chinese on stage at CES.

Image of Brain Robotics' affordable prosthetic, courtesy of Brain Robotics.

Eye-Tracking Technology

Eye-tracking has attracted interest from several industries in recent years. Marketers and developers of beacon technologies have investigated it because it can help determine whether someone is engaging with an advertisement. It's also part of the future of gaming: some companies are looking to use eye-tracking to let high-definition games run on less powerful machines by rendering high-definition graphics exactly where a player is looking and nowhere else (possibly greatly reducing processing demands).
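The gaming idea, rendering full detail only near the gaze point, can be sketched as a simple falloff function. This is an illustrative assumption rather than any vendor's implementation: the function name `shading_scale`, the radii, and the linear falloff are all hypothetical choices.

```python
import math


def shading_scale(pixel, gaze, inner_radius=200.0, outer_radius=600.0, min_scale=0.25):
    """Return the fraction of full resolution to render at `pixel`.

    Full detail (1.0) within `inner_radius` pixels of the gaze point,
    falling linearly to `min_scale` at `outer_radius` and beyond.
    """
    distance = math.dist(pixel, gaze)
    if distance <= inner_radius:
        return 1.0
    if distance >= outer_radius:
        return min_scale
    t = (distance - inner_radius) / (outer_radius - inner_radius)
    return 1.0 - t * (1.0 - min_scale)


# The player looks at the center of a 1920x1080 screen.
gaze_point = (960, 540)
print(shading_scale((960, 540), gaze_point))  # at the gaze point
print(shading_scale((0, 0), gaze_point))      # far corner of the screen
```

Because most of the screen sits outside the inner radius, most pixels would be rendered at reduced detail, which is where the potential savings come from.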

Of course, it's also used for more important purposes. It's been considered for assisted driving to help determine how much attention a driver is paying to the road. As revealed at CES this year, it can also be used to identify vision and health problems via the RightEye EyeQ system.

People with limited or volatile control of their extremities can struggle with traditional computer interfaces: keyboards, mice, and touchscreens require a great deal of fine motor control that can be impaired by a variety of ailments. For people who struggle with traditional interfaces, Tobii.com has eye-tracking solutions that, coupled with software, allow users to control their personal computers with only their eye movements.

Image of Tobii eye-tracking from Tobii.com

This technology can also be used by webpage designers, marketers, and gamers to track gaze while viewing static and moving displays. This allows researchers to better understand where subjects focus their attention in a design.
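One simple way researchers might summarize where attention lands is to bin gaze points into a grid and count the hits per cell. The sketch below assumes gaze points arrive as `(x, y)` pixel coordinates. The function name `gaze_heatmap` and the 100-pixel cell size are hypothetical and are not part of any Tobii software.

```python
from collections import Counter


def gaze_heatmap(points, cell_size=100):
    """Count gaze points per grid cell, keyed by (column, row)."""
    counts = Counter()
    for x, y in points:
        counts[(int(x // cell_size), int(y // cell_size))] += 1
    return counts


# Illustrative gaze samples in screen pixels (made-up values).
points = [(10, 10), (50, 90), (150, 20), (990, 540)]
heatmap = gaze_heatmap(points)
print(heatmap.most_common(1))  # the grid cell that drew the most gaze samples
```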

Image of Tobii Eye Sensor from Tobii.com

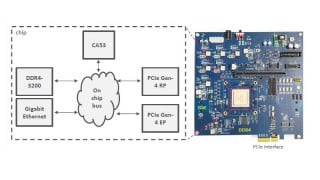

The Tobii sensor works in the near-infrared (NIR) range of the electromagnetic spectrum. It projects NIR light onto a subject's face and eyes, then records the reflections and interprets them with the Tobii EyeChip ASIC to determine eye-gaze point, eye positions, user presence, and fixation points. Coupled with the Tobii software, the sensor lets developers create games, programs, and interactive advertisements that respond intuitively to a user's gaze.
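How the EyeChip derives fixation points is not described here, but a common textbook approach is dispersion-based detection: a run of gaze samples counts as a fixation if the points stay close together for long enough. The sketch below is a simplified variant of that idea, not Tobii's algorithm. The function names and the thresholds (30 pixels, 0.1 seconds) are assumptions chosen for illustration.

```python
def _dispersion(window):
    """Spread of a window of (t, x, y) samples: x-range plus y-range."""
    xs = [p[1] for p in window]
    ys = [p[2] for p in window]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def detect_fixations(samples, max_dispersion=30.0, min_duration=0.1):
    """Find fixations in time-sorted (t_seconds, x, y) gaze samples.

    Returns a list of (start_t, end_t, center_x, center_y) tuples.
    """
    fixations = []
    start = 0
    n = len(samples)
    while start < n:
        end = start
        # Grow the window while the points stay within the dispersion limit.
        while end + 1 < n and _dispersion(samples[start:end + 2]) <= max_dispersion:
            end += 1
        duration = samples[end][0] - samples[start][0]
        if duration >= min_duration:
            window = samples[start:end + 1]
            cx = sum(p[1] for p in window) / len(window)
            cy = sum(p[2] for p in window) / len(window)
            fixations.append((samples[start][0], samples[end][0], cx, cy))
            start = end + 1  # continue after the fixation
        else:
            start += 1  # too short to be a fixation; slide forward
    return fixations


# Synthetic data: a steady gaze, a quick jump, then another steady gaze.
samples = (
    [(i * 0.02, 100 + (i % 2), 100) for i in range(10)]
    + [(0.20, 250, 180), (0.22, 400, 250)]
    + [((12 + i) * 0.02, 500, 300 + (i % 2)) for i in range(10)]
)
for fixation in detect_fixations(samples):
    print(fixation)
```

An application could then treat each reported fixation center as the point the user is attending to, for example to highlight a menu item or trigger an in-game response.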

Tobii EyeChip ASIC courtesy Tobii.com

Summary

As technology continues to progress, innovators will keep discovering new ways to bridge the gap between humans and computers. These technologies will continue to increase in ability and decrease in price. In the not-too-distant future, it's conceivable that computer interfaces will be developed that use touchscreens, gaze-tracking, and gesture recognition to completely replace the decades-old computer mouse.
